TL;DR:

- Many enterprises equate software interoperability with basic API integration, a misunderstanding that has cost hundreds of millions of dollars and years of progress. True interoperability means systems exchange data, interpret it correctly, and use it meaningfully across syntactic, semantic, organizational, and technical dimensions, which requires shared standards and ongoing management. Standardized APIs, hub-and-spoke architectures, AI-assisted mapping, and a deliberate mix of integration styles are the main strategies for scalable enterprise interoperability.

Misunderstanding what software interoperability is has cost enterprises hundreds of millions of dollars and years of lost momentum. Many IT leaders treat it as a synonym for basic integration: connect two systems with an API and call the job done. That framing is incomplete, and the gap between connectivity and true interoperability is where large-scale projects quietly collapse. This article clarifies the definition, distinguishes interoperability from integration, examines the real benefits and failure patterns, and gives you a practical framework for making interoperability work inside complex enterprise environments.

Key Takeaways

| Point | Details |
|---|---|
| Software interoperability defined | It enables meaningful data exchange and use between software systems, improving enterprise operations. |
| Integration vs interoperability | Interoperability requires standardization and meaningful data use beyond just connecting systems. |
| Clear business benefits | Interoperable systems can cut employee task time by up to 40% by automating manual work, boosting efficiency and data accuracy. |
| Common project challenges | Large-scale efforts face high failure rates due to complexity, inconsistent data, and legacy systems. |
| Technical best practices | Standardized APIs, hub-and-spoke integration, and AI-driven automation enhance success and scalability. |

Understanding software interoperability: definition and importance

Having outlined the importance of interoperability, it is essential to grasp the precise meaning and scope of the term in enterprise settings. Most definitions start and stop at data exchange, but that only captures half the picture.

According to the Software Architecture Guild, interoperability is the capability for software systems or components to exchange data, interpret it correctly, and use it meaningfully, measured by accuracy, latency, and success rate of interactions in distributed environments. That last clause matters enormously. You can move data between two systems successfully and still have interoperability fail if the receiving system misinterprets a field, applies a different data type, or simply ignores a payload it does not recognize.

In enterprise contexts, interoperability in software systems spans multiple dimensions:

- Syntactic interoperability: Systems agree on data formats such as JSON, XML, or CSV so that a message sent from one system can be parsed by another without transformation errors.

- Semantic interoperability: Systems share a common understanding of what data means, not just how it is formatted. A field called “customer_id” in your CRM maps correctly to “client_reference” in your ERP, without a developer manually building that bridge each time.

- Organizational interoperability: Policies, governance, and workflows across teams and departments align to support consistent data use, which is often the weakest link in large organizations.

- Technical interoperability: Underlying protocols, APIs, and infrastructure are compatible enough to allow communication without proprietary workarounds.
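The difference between the syntactic and semantic dimensions can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the field names `customer_id` and `client_reference` come from the example above, while the `full_name` and `client_name` pair, the mapping table, and both function names are hypothetical.

```python
import json

# Hypothetical CRM-to-ERP field mapping. Only customer_id -> client_reference
# comes from the article; the second pair is an illustrative assumption.
CRM_TO_ERP_FIELDS = {
    "customer_id": "client_reference",
    "full_name": "client_name",
}


def parse_crm_message(raw: str) -> dict:
    """Syntactic step: the payload must parse as a JSON object."""
    record = json.loads(raw)
    if not isinstance(record, dict):
        raise ValueError("expected a JSON object")
    return record


def translate_to_erp(record: dict) -> dict:
    """Semantic step: rename fields to the ERP vocabulary, rejecting unknowns."""
    unknown = set(record) - set(CRM_TO_ERP_FIELDS)
    if unknown:
        # Failing loudly avoids silently ignoring a payload the ERP cannot interpret.
        raise KeyError(f"no ERP mapping for fields: {sorted(unknown)}")
    return {CRM_TO_ERP_FIELDS[name]: value for name, value in record.items()}


crm_payload = '{"customer_id": "C-1042", "full_name": "Ada Lovelace"}'
erp_record = translate_to_erp(parse_crm_message(crm_payload))
print(erp_record)
```

A payload can pass `parse_crm_message` and still fail `translate_to_erp`, which is the gap between being parseable and being understood.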

Measuring interoperability requires you to look beyond successful API calls. Track the accuracy of data after transformation, the latency introduced by intermediary layers, and the rate at which cross-system transactions complete without error or manual correction. If any of those metrics are unhealthy, your systems are connected but not truly interoperable. For teams building or purchasing enterprise software, this distinction changes how you evaluate vendors, architect systems, and define success criteria. A deeper look at software integration concepts helps establish the right foundation before any architecture decision is made.
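One way to make those three measurements concrete is a small summary over logged cross-system transactions. The sketch below is an assumption about how such a log might look: the `Transaction` fields and the `interoperability_metrics` function are hypothetical names, not part of any particular monitoring product.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    """One cross-system exchange; every field here is an illustrative assumption."""
    completed: bool       # finished without error or manual correction
    fields_sent: int      # fields in the source record
    fields_correct: int   # fields that still matched after transformation
    latency_ms: float     # time added by intermediary layers


def interoperability_metrics(transactions: list[Transaction]) -> dict[str, float]:
    """Summarize success rate, post-transformation accuracy, and mean latency."""
    if not transactions:
        raise ValueError("no transactions to measure")
    total_fields = sum(t.fields_sent for t in transactions)
    if total_fields == 0:
        raise ValueError("transactions carried no fields")
    count = len(transactions)
    return {
        "success_rate": sum(t.completed for t in transactions) / count,
        "accuracy": sum(t.fields_correct for t in transactions) / total_fields,
        "mean_latency_ms": sum(t.latency_ms for t in transactions) / count,
    }


sample = [
    Transaction(True, 10, 10, 120.0),
    Transaction(True, 10, 9, 80.0),
    Transaction(False, 10, 6, 400.0),
    Transaction(True, 10, 10, 200.0),
]
metrics = interoperability_metrics(sample)
print(metrics)
# {'success_rate': 0.75, 'accuracy': 0.875, 'mean_latency_ms': 200.0}
```

In this sample every API call may have returned successfully, yet one transaction in four still needed correction and one field in eight arrived wrong.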

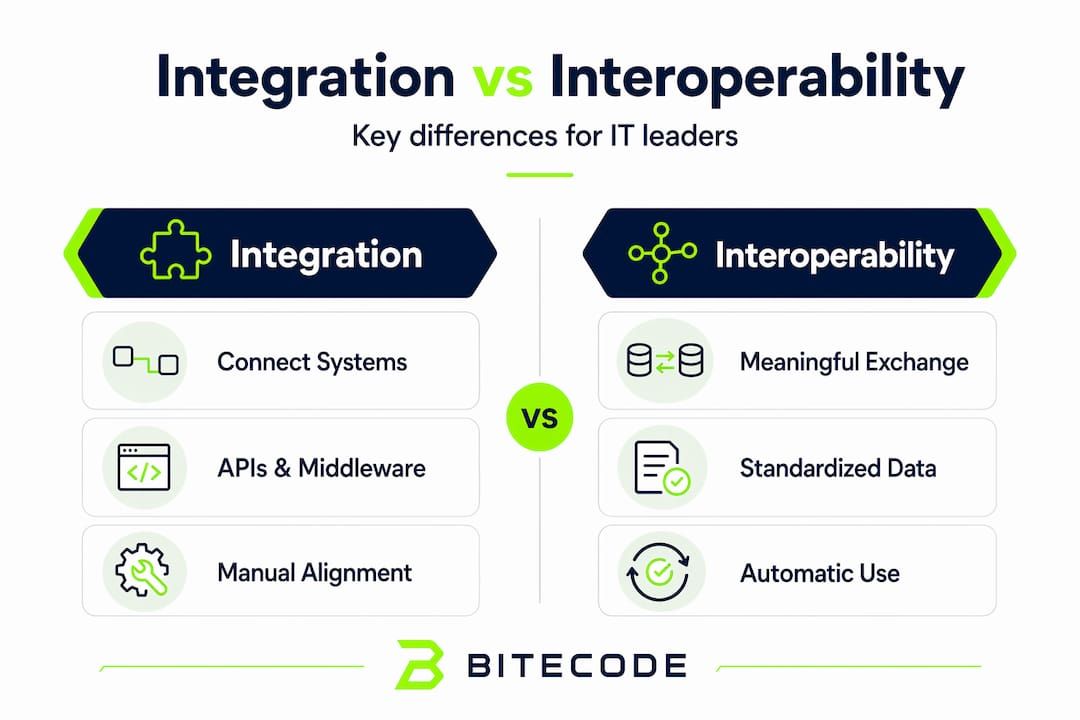

Key differences between integration and interoperability

To fully appreciate interoperability, you need to compare it directly with integration, a commonly conflated but distinct concept.

Per Gartner’s definition, integration links application functionality and data with IT infrastructure via APIs, web services, or middleware, while interoperability requires standards for meaningful exchange and use without manual reformatting. Integration is about connectivity. Interoperability is about comprehension. You can have integration without interoperability. You cannot have meaningful interoperability without some form of integration.

| Dimension | Integration | Interoperability |
|---|---|---|
| Primary goal | Connect systems | Enable meaningful, automated data use |
| Success metric | Successful data transfer | Accurate interpretation and consumption |
| Data handling | May require manual reformatting | Standards eliminate manual reformatting |
| Scalability | Often point-to-point, fragile at scale | Standards-based, designed to scale |
| Governance required | Low to moderate | Moderate to high |

The most instructive failure pattern in enterprise software integration is the team that declares integration complete after building point-to-point connections, then discovers six months later that downstream systems are silently consuming malformed data. That is integration without interoperability.

Key distinctions worth internalizing:

- Integration often serves a single use case; interoperability creates a reusable exchange layer.

- Interoperability demands shared standards, not just shared connections.

- Manual reformatting is a direct sign that interoperability has not been achieved.

- A well-designed interoperability layer allows new systems to join the ecosystem without rebuilding existing connections.

This distinction reshapes how teams should evaluate any system they acquire or build. Ask not just “can it connect?” but “can it understand and be understood?”

Benefits of interoperable software systems in enterprises

Having differentiated interoperability from integration, it is vital to explore the concrete advantages it offers enterprises.

The productivity impact is direct. Interoperable systems reduce employee task time by up to 40% through automation, eliminating manual data entry across CRM, marketing, and project tools. When a sales rep closes a deal, the order should flow automatically into inventory, finance, and fulfillment without a single copy-paste action. That is the baseline promise of interoperability, and it compounds significantly at enterprise scale.

The benefits of software interoperability extend beyond individual productivity:

- Real-time decision-making: When systems share data in near real-time, leadership teams gain visibility into operations that previously took days to surface via manual reporting.

- Reduced error rates: Eliminating manual data transfer eliminates the transcription errors that silently distort financial records, customer histories, and compliance logs.

- Faster onboarding of new tools: A genuinely interoperable architecture allows you to swap or add systems without rebuilding the entire data layer. This is the difference between a two-week deployment and a six-month project.

- Foundation for AI and automation: AI models require clean, consistent, and timely data. Interoperability is the prerequisite for any meaningful enterprise automation initiative. Without it, AI processes reflect the same data quality problems that humans were already managing manually.

- Vendor flexibility: Organizations locked into proprietary data formats lose negotiating power. Interoperability based on open standards keeps options open.

Teams that have implemented interoperable architectures report faster time-to-insight, lower operational overhead, and significantly reduced IT maintenance burden. For a practical look at how these gains translate into production systems, reviewing automation strategies for enterprise environments illustrates common patterns used to reach them.

Challenges and pitfalls in achieving software interoperability

While the benefits of interoperability are clear, it is equally important to understand why many projects face serious difficulties and fail.

The numbers are sobering. Large SAP migrations for interoperability in Fortune 500 companies take 3 to 5 years and cost $100 to $500 million, with 70% of such programs failing to deliver intended outcomes. These are not small projects managed by inexperienced teams. They are well-funded initiatives that underestimate the complexity hiding inside data schemas, business rules, and legacy code.

The most common failure points, in order of how frequently they are underestimated:

- Legacy system complexity: Older systems were not built with interoperability in mind. They use proprietary schemas, undocumented field relationships, and business logic embedded directly in the database layer rather than in a service boundary.

- Inconsistent data schemas: Two systems may both store “product price,” but one stores it pre-tax, one post-tax, and neither has documented which convention they use. Reconciling this manually across thousands of fields is where timelines collapse.

- Point-to-point proliferation: Early-stage integration often starts with direct system-to-system connections. As the number of systems grows, the number of connections grows quadratically, and a single field change in one system breaks every integration touching it.

- Inadequate testing: Compatibility testing is often deferred until after development, which means interoperability failures surface in production rather than in controlled environments.

- Organizational misalignment: Technical teams can build an interoperable system that business units refuse to adopt because data governance policies were not agreed upon in advance.
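The "product price" mismatch described in the list above can be illustrated with a short normalization step. This is a sketch under loud assumptions: the system names, the `PRICE_IS_PRE_TAX` table, and the flat 20% `TAX_RATE` are all hypothetical, and real tax handling is considerably more involved.

```python
from decimal import Decimal

# Assumed conventions for illustration: which systems store prices before tax.
PRICE_IS_PRE_TAX = {"erp": True, "webshop": False}
TAX_RATE = Decimal("0.20")  # hypothetical flat rate, not a real tax rule


def to_pre_tax(system: str, price: Decimal) -> Decimal:
    """Normalize a price to the pre-tax convention before comparing systems."""
    if system not in PRICE_IS_PRE_TAX:
        # An undocumented convention is exactly the failure mode described above.
        raise KeyError(f"price convention for {system!r} is undocumented")
    if PRICE_IS_PRE_TAX[system]:
        return price
    return (price / (1 + TAX_RATE)).quantize(Decimal("0.01"))


erp_price = to_pre_tax("erp", Decimal("100.00"))
shop_price = to_pre_tax("webshop", Decimal("120.00"))
print(erp_price == shop_price)  # True
```

Without the explicit convention table, the two raw values (100.00 and 120.00) look like a pricing discrepancy rather than the same price stored two ways.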

Pro Tip: Before any interoperability initiative begins, commission an audit of your top 10 most-used data fields across all systems involved. Inconsistencies found at that stage cost hours to resolve. Found in production, they cost months. For teams evaluating where to focus first, reviewing enterprise software best practices helps prioritize the highest-risk areas.
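A field audit of this kind can start very simply. The sketch below assumes you can export each system's schema as a mapping from field name to declared type; the `SCHEMAS` snapshot, the system names, and the `audit_fields` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical schema snapshots: field name -> declared type, per system.
SCHEMAS = {
    "crm": {"customer_id": "string", "price": "decimal", "currency": "string"},
    "erp": {"customer_id": "integer", "price": "decimal", "currency": "string"},
    "billing": {"customer_id": "string", "price": "float", "currency": "string"},
}


def audit_fields(schemas: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return the fields whose declared type differs between systems."""
    seen: dict[str, dict[str, str]] = defaultdict(dict)
    for system, fields in schemas.items():
        for name, declared_type in fields.items():
            seen[name][system] = declared_type
    return {
        name: by_system
        for name, by_system in seen.items()
        if len(set(by_system.values())) > 1
    }


conflicts = audit_fields(SCHEMAS)
for name, by_system in conflicts.items():
    print(name, by_system)
```

Type mismatches are only the easiest inconsistency to detect automatically. Differences in meaning, such as units or tax conventions, still need a human review of each flagged and unflagged field.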

Technical approaches and best practices to enable interoperability

Understanding challenges sets the stage for exploring proven technical approaches to successfully achieve interoperability.

The Software Architecture Guild notes that achieving interoperability depends on tactics confronting heterogeneity like standardization on REST APIs and JSON, adapters for legacy systems, and rigorous testing, while recognizing the trade-offs involved. No single tactic solves every scenario. The key is combining approaches based on what each system in your environment requires.

Core technical practices:

- Standardize on REST APIs and JSON for new systems: This is the lowest-friction starting point. Any new system acquired or built should expose RESTful endpoints by default and consume standardized JSON payloads.

- Use adapters and wrappers for legacy systems: Legacy systems that cannot be modified directly can be wrapped in an adapter layer that translates their proprietary output into a standard format. This extends their lifespan without requiring a full replacement.

- Implement a hub-and-spoke integration layer: Rather than connecting every system to every other system directly, route all data through a central hub. Transformations, validation, and error handling happen in one place, which means a field change in one system requires a single update to the hub rather than changes across every downstream connection.

- Conduct compatibility and performance testing throughout development: Test continuously, not only at the end. Interoperability failures are dramatically cheaper to fix during development than after deployment.

- Use iPaaS platforms for orchestration: Integration Platform as a Service tools manage complex, multi-step workflows without requiring custom middleware code for every new use case.
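The adapter and hub-and-spoke practices from the list above combine naturally, and a minimal in-process sketch shows the shape. Everything named here is an assumption for illustration: the `Hub` class, the legacy field names `CLNT_REF` and `AMT`, and the canonical record layout are hypothetical, and a production hub would be a separate service or iPaaS flow rather than a Python object.

```python
from typing import Callable

Record = dict  # canonical records are plain dicts in this sketch


class Hub:
    """Central hub: one adapter per source system, one canonical record format."""

    REQUIRED_FIELDS = ("customer_id", "amount")

    def __init__(self) -> None:
        self._adapters: dict[str, Callable[[Record], Record]] = {}
        self._subscribers: list[Callable[[Record], None]] = []

    def register_adapter(self, source: str, adapter: Callable[[Record], Record]) -> None:
        self._adapters[source] = adapter

    def subscribe(self, handler: Callable[[Record], None]) -> None:
        self._subscribers.append(handler)

    def publish(self, source: str, raw: Record) -> Record:
        # Transformation and validation happen here, in one place.
        canonical = self._adapters[source](raw)
        missing = [f for f in self.REQUIRED_FIELDS if f not in canonical]
        if missing:
            raise ValueError(f"{source} record is missing {missing}")
        for handler in self._subscribers:
            handler(canonical)
        return canonical


def legacy_erp_adapter(raw: Record) -> Record:
    """Wrapper for a legacy system: translate its proprietary field names."""
    return {"customer_id": raw["CLNT_REF"], "amount": float(raw["AMT"])}


def crm_adapter(raw: Record) -> Record:
    return {"customer_id": raw["customer_id"], "amount": float(raw["amount"])}


hub = Hub()
hub.register_adapter("legacy_erp", legacy_erp_adapter)
hub.register_adapter("crm", crm_adapter)

received: list[Record] = []
hub.subscribe(received.append)  # stands in for a downstream system

hub.publish("legacy_erp", {"CLNT_REF": "C-1042", "AMT": "199.90"})
hub.publish("crm", {"customer_id": "C-2077", "amount": 50})
print(received)
```

If the legacy system renames `CLNT_REF`, only `legacy_erp_adapter` changes. Downstream subscribers keep receiving the same canonical shape.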

| Approach | Best for | Trade-off |
|---|---|---|
| REST API standardization | Greenfield systems | Requires discipline on new procurement |
| Adapter/wrapper layer | Legacy systems | Adds latency and maintenance overhead |
| Hub-and-spoke integration | Multi-system enterprises | Hub becomes a critical dependency |
| iPaaS orchestration | Complex workflow management | Vendor lock-in risk if not abstracted |

Pro Tip: When selecting an iPaaS platform, verify that it supports your existing authentication protocols, data formats, and error-handling standards before committing. Changing platforms mid-project relocates complexity into the vendor relationship rather than eliminating it. Teams building these architectures will find value in reviewing how to integrate automation modules effectively, as well as exploring workflow automation patterns that work at enterprise scale.

Strategic considerations: balancing data integration styles for interoperability

With technical tactics addressed, enterprises must also consider strategic style decisions in data integration to maximize interoperability effectiveness.

Gartner identifies three primary integration styles, and applying a style to a use case it does not fit wastes effort. Choosing the wrong style is a common and costly mistake that does not always surface until a system is under production load.

- Data-centric integration: Focuses on batch processing and data flows between systems. Well-suited for nightly syncs, reporting pipelines, and warehouse-to-ERP transfers where real-time latency is not required.

- Event-centric integration: Built around asynchronous event handling. When a customer places an order, an event fires and downstream systems react independently. This supports real-time responsiveness without tight coupling between systems.

- Application-centric integration: Tightly couples specific application workflows for particular user-facing scenarios. Useful for purpose-built processes like quote-to-cash or employee onboarding, but poor at generalizing across other use cases.

The mistake most teams make is defaulting to one style across their entire enterprise environment. A data warehouse sync does not need event-driven architecture. A customer-facing order workflow cannot rely on nightly batch processing. Matching the integration style to the use case is a strategic decision, not a technical one. Teams managing complex enterprise automation processes will recognize this pattern as the underlying reason why so many automation projects underdeliver despite technically sound execution.
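To make the contrast between the event-centric and data-centric styles tangible, here is a small sketch of both. It rests on simplifying assumptions: `EventBus` is a synchronous, in-process stand-in for a real message broker, and the event name, order fields, and `nightly_batch_totals` function are hypothetical.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """Synchronous in-process stand-in for a message broker (an assumption)."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def on(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._handlers[event_type].append(handler)

    def emit(self, event_type: str, payload: dict) -> None:
        # Each subscriber reacts on its own; the emitter knows none of them.
        for handler in self._handlers[event_type]:
            handler(payload)


def nightly_batch_totals(rows: list[dict]) -> dict[str, int]:
    """Data-centric style: aggregate a full day of rows in one pass."""
    totals: dict[str, int] = defaultdict(int)
    for row in rows:
        totals[row["sku"]] += row["quantity"]
    return dict(totals)


# Event-centric: inventory and finance react as soon as the order is placed.
reserved: list[str] = []
invoiced: list[str] = []
bus = EventBus()
bus.on("order_placed", lambda order: reserved.append(order["sku"]))
bus.on("order_placed", lambda order: invoiced.append(order["order_id"]))
bus.emit("order_placed", {"order_id": "O-1", "sku": "A-100", "quantity": 2})

# Data-centric: the reporting warehouse only needs the totals once a day.
day_of_orders = [
    {"order_id": "O-1", "sku": "A-100", "quantity": 2},
    {"order_id": "O-2", "sku": "A-100", "quantity": 3},
    {"order_id": "O-3", "sku": "B-200", "quantity": 1},
]
print(reserved, invoiced, nightly_batch_totals(day_of_orders))
```

Both paths consume the same order data. What differs is when each consumer needs it, which is the question that should drive the choice of style.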

Why many interoperability initiatives fail and how to succeed

Here is the uncomfortable pattern that most post-mortems do not name directly: interoperability projects fail not because teams lack technical skill, but because they underestimate how complexity scales.

Point-to-point integration feels manageable at five systems. At fifteen, it becomes brittle. At thirty, it becomes unmanageable. As one analysis of mid-market integration patterns notes, point-to-point integration scales poorly and hub-and-spoke patterns centralize transformation and error handling to avoid breakage from field changes. Teams that start with point-to-point connections are not making a mistake in the short term. They are deferring a structural problem until it arrives with interest.
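The scaling problem is easy to quantify with back-of-the-envelope arithmetic. The sketch below assumes the worst case, in which every system is connected directly to every other one; real estates are rarely a full mesh, so treat the point-to-point figures as an upper bound. Both function names are illustrative.

```python
def point_to_point_links(systems: int) -> int:
    """Worst case: every system connects to every other, n * (n - 1) / 2 links."""
    return systems * (systems - 1) // 2


def hub_and_spoke_links(systems: int) -> int:
    """Each system keeps a single connection to the central hub."""
    return systems


for n in (5, 15, 30):
    print(n, point_to_point_links(n), hub_and_spoke_links(n))
# 5 10 5
# 15 105 15
# 30 435 30
```

Going from five to thirty systems multiplies the hub-and-spoke connections by six and the potential point-to-point connections by more than forty.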

The second failure pattern is treating interoperability as a one-time project rather than a continuous architectural practice. Systems change. Business rules evolve. New acquisitions bring new data models. Organizations that build interoperability as a capability rather than a deliverable are far better positioned to absorb this ongoing change without crisis.

The genuinely counterintuitive shift emerging in 2026 is the role of AI in this space. Modern frontier models can read custom enterprise code, including obscure dialects like ABAP, automating mapping tasks that were previously manual. That changes the economics of legacy system migration significantly. Tasks that once required teams of specialized consultants over multi-year engagements can now be partially automated, compressing timelines and reducing cost. This does not eliminate the need for human judgment, particularly around business logic and governance decisions. But it does mean that the timeline and cost figures that made interoperability initiatives feel prohibitive are shifting.

The practical advice is this: adopt AI-assisted mapping and testing tools early in any interoperability initiative, not as an afterthought. Use hub-and-spoke architecture from the start, even if it feels over-engineered for your current scale. And treat your software best practices framework as a living document, not a one-time checklist. The organizations that succeed at interoperability are those that build it into their architecture culture, not just their project plans.

How Bitecode supports your interoperability goals

After working through the theory and strategy of interoperability, the practical challenge remains: how do you accelerate implementation without accumulating technical debt or building fragile connections that break under load?

Bitecode is built specifically for this challenge. The platform’s modular architecture means enterprises can deploy pre-built components covering AI automation, workflow orchestration, and financial processing without starting from scratch on the integration layer. The AI assistant module accelerates data mapping, schema reconciliation, and workflow automation tasks that typically consume the most time in interoperability projects. For enterprises managing financial transactions across systems, the blockchain payment system enables secure, standards-based transactions that are inherently interoperable across participating nodes. Projects start with up to 60% of the baseline system pre-built, which means your team focuses on business-domain complexity rather than boilerplate infrastructure.

Frequently asked questions

What is the main difference between software integration and interoperability?

Integration links application functionality and data via APIs or middleware, while interoperability requires standardized, meaningful data exchange without manual intervention, ensuring systems can cooperate automatically rather than just communicate.

Why do large-scale interoperability projects often fail?

Roughly 70% of large migration programs fail to deliver intended outcomes. The main causes are complex legacy systems, inconsistent data schemas, multi-year timelines, high costs, and inadequate planning for how integration complexity scales across dozens of connected systems.

How can enterprises improve success rates in achieving software interoperability?

Enterprises improve outcomes by adopting standardized REST APIs, using hub-and-spoke integration architectures, implementing orchestration platforms, and applying AI-assisted automation for schema mapping and compatibility testing from the start of the project.

What role does AI play in modern interoperability projects?

AI can partially automate the mapping of diverse schemas and can read legacy code in obscure dialects such as ABAP. That reduces manual mapping work that previously required specialist consultants over multi-year engagements, compressing both timelines and cost, while business logic and governance decisions still need human judgment.

What integration styles should be balanced for effective data interoperability?

Balancing data-centric, event-centric, and application-centric integration styles based on specific use cases avoids wasted effort and ensures data is delivered and consumed in the format and timing each workflow actually requires.