TL;DR:

- Enterprise software integration is a complex organizational effort that requires careful orchestration of data, processes, and governance beyond simple technical wiring.

- Choosing appropriate patterns and establishing robust data governance are critical to avoid fragile dependencies and escalating risks, especially with AI-generated automation.

- Effective integration depends on aligning technology choices with clear business objectives, cross-team collaboration, and proactive risk management strategies.

Enterprise software integration is one of the most consequential decisions an IT organization will make, yet it is routinely underestimated. Leaders who treat it as straightforward configuration, connecting System A to System B, often discover months later that they have built a fragile tangle of dependencies that slows innovation and amplifies risk. The real challenge is not technical wiring. It is orchestrating data, processes, governance, and people across a complex digital ecosystem that was never designed to speak a common language. This guide breaks down the core mechanics, proven patterns, leading failure modes, and actionable strategies that separate successful integrations from expensive lessons.

Key Takeaways

| Point | Details |
|---|---|
| Integration is strategic | Enterprise software integration connects business processes, systems, and data holistically; it’s much more than linking apps. |
| Pattern choice is critical | Selecting the right integration methodology (point-to-point, ESB, API-led, etc.) directly impacts scalability and risk. |
| Pitfalls are common | Most failures result from ignoring business alignment, underestimating governance, and quick patchwork solutions. |
| Adopt best practices | Commit to governance, phased rollouts, and clear monitoring to reduce risk and maximize ROI. |
| Technology must serve business | Success is rooted in business transformation and culture, not just technical execution. |

Defining enterprise software integration

Enterprise software integration is the practice of connecting distinct applications, data sources, and automated workflows so that an organization functions as a single, coherent digital ecosystem rather than a collection of isolated tools. This definition matters because it frames integration not as a feature to be toggled on, but as architectural work that spans the entire business stack. An ERP system exchanging data with a CRM, a logistics platform triggering financial settlements, or a compliance engine reading audit logs in real time: all of these require the same underlying discipline.

Any conversation about integration strategy and ROI has to start with the four core mechanics: data transformation (mapping formats and schemas), protocol translation, message routing, and error handling. Together they bridge heterogeneous systems with differing data models and interfaces. Each mechanic carries its own complexity tax:

- Data transformation converts proprietary schemas, say a JSON payload from a SaaS CRM, into an XML structure expected by a legacy ERP. Mismatched field mappings are a leading cause of silent data corruption.

- Protocol translation bridges systems that speak fundamentally different languages, whether REST, SOAP, AMQP, or EDI. Without it, modern cloud services and on-premise systems simply cannot communicate.

- Message routing determines which system receives which data, at what time, under what conditions. A routing error in a financial workflow can mean duplicate payments or missed reconciliations.

- Error handling defines what happens when a message is malformed, a service is unavailable, or a timeout occurs. Most organizations under-invest here and pay the price during production incidents.
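To make the data transformation mechanic concrete, here is a minimal sketch of a JSON-to-XML mapping. The field names, the `FIELD_MAP` table, and the `Customer` element are hypothetical; a real ERP would dictate its own schema. The point is that the mapping is explicit and that a missing source field raises an error rather than silently producing a partial record.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical mapping from CRM JSON keys to ERP XML element names.
FIELD_MAP = {
    "customerId": "CustomerNumber",
    "fullName": "Name",
    "email": "EmailAddress",
}


def crm_json_to_erp_xml(payload: str) -> str:
    """Convert one CRM JSON record into the XML shape a legacy ERP might expect."""
    record = json.loads(payload)
    # Fail loudly on missing fields instead of emitting a partial record.
    missing = [key for key in FIELD_MAP if key not in record]
    if missing:
        raise ValueError(f"missing source fields: {missing}")
    root = ET.Element("Customer")
    for source_key, target_tag in FIELD_MAP.items():
        ET.SubElement(root, target_tag).text = str(record[source_key])
    return ET.tostring(root, encoding="unicode")


xml_out = crm_json_to_erp_xml(
    '{"customerId": 42, "fullName": "Ada Lovelace", "email": "ada@example.com"}'
)
print(xml_out)
```

Keeping the mapping in one declared table, rather than scattered across code, makes it something a data steward can review and version.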

Together, these mechanics form the backbone of digital transformation, enabling cross-system automation, unified analytics, and regulatory compliance. Organizations that design them thoughtfully gain speed and resilience. Those that treat them as afterthoughts accumulate what engineers call integration debt, technical obligations that compound over time and eventually block strategic initiatives.
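Message routing and error handling can be sketched together. The `Router` class below is an illustrative assumption, not a reference to any particular product: it routes by a hypothetical `type` field, retries a failing handler a fixed number of times, and parks anything it cannot deliver in a dead-letter list so that failures stay visible.

```python
from typing import Callable

Handler = Callable[[dict], None]


class Router:
    """Routes messages by type; undeliverable messages go to a dead-letter list."""

    def __init__(self, max_attempts: int = 3) -> None:
        self.routes: dict[str, Handler] = {}
        self.dead_letters: list[tuple[dict, str]] = []
        self.max_attempts = max_attempts

    def register(self, message_type: str, handler: Handler) -> None:
        self.routes[message_type] = handler

    def dispatch(self, message: dict) -> bool:
        handler = self.routes.get(message.get("type", ""))
        if handler is None:
            self.dead_letters.append((message, "no route"))
            return False
        last_error = ""
        for _ in range(self.max_attempts):
            try:
                handler(message)
                return True
            except Exception as exc:  # broad on purpose: this is a sketch
                last_error = repr(exc)
        # Every attempt failed: keep the message and the reason for later review.
        self.dead_letters.append((message, last_error))
        return False


settled: list[dict] = []
router = Router()
router.register("invoice.paid", settled.append)
router.dispatch({"type": "invoice.paid", "id": 1})
router.dispatch({"type": "unknown.event", "id": 2})
print(len(settled), router.dead_letters)
```

A production version would typically add backoff between attempts and require idempotent handlers, since a retried financial message must not produce a duplicate payment.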

Common integration patterns and methodologies

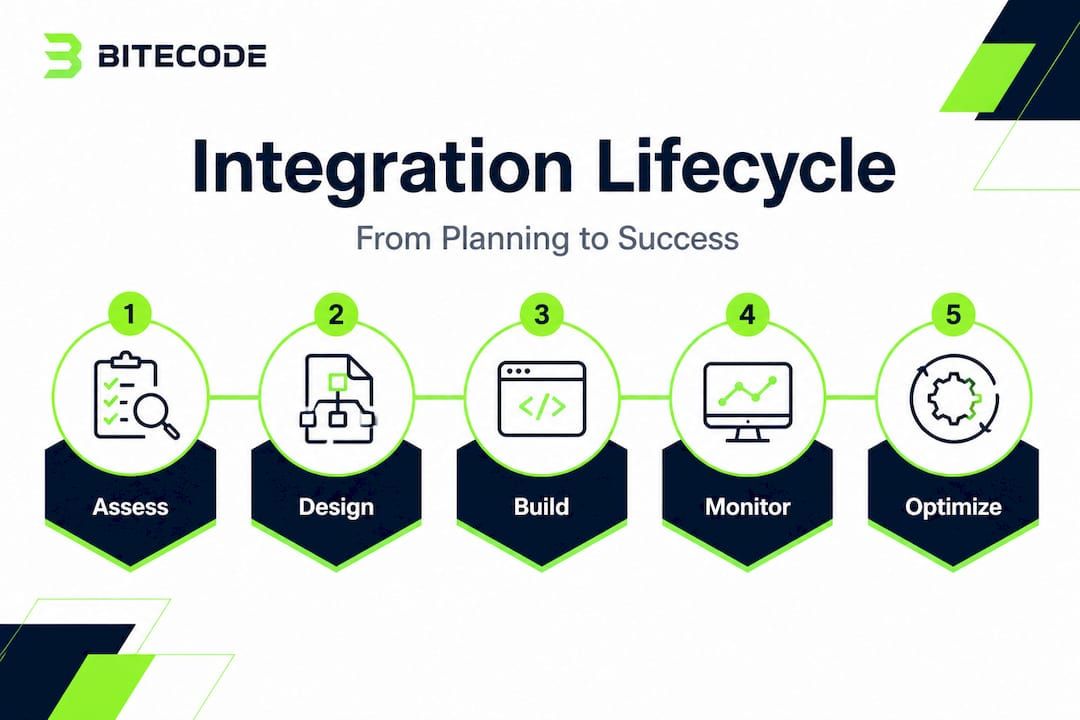

Knowing what integration is sets the foundation. Knowing how to architect it determines whether your investment pays off or creates new problems. Five dominant patterns have emerged over the past two decades, each suited to different organizational contexts, team sizes, and cloud adoption levels.

Key methodologies and patterns include point-to-point (simple but scales poorly to N² connections), Hub-and-Spoke/ESB (centralized routing and transformation), Event-Driven (decoupled via pub/sub for real-time scenarios), API-led (stable contracts via gateways), and iPaaS (cloud-native for hybrid and multi-cloud environments). The table below maps each pattern to its strengths, ideal use cases, and primary challenges.

| Pattern | Key strength | Best suited for | Main challenge |
|---|---|---|---|
| Point-to-point | Fast to implement | Small teams, 2-3 systems | Scales to N² connections; becomes unmanageable |
| Hub-and-Spoke/ESB | Centralized governance | Large enterprises with standard data flows | Central bottleneck; heavyweight to maintain |
| Event-driven | Real-time, decoupled | High-volume, asynchronous workflows | Observability complexity; event ordering |
| API-led | Stable contracts | Customer-facing integrations, microservices | Requires mature API governance and versioning |
| iPaaS | Pre-built connectors | Cloud and SaaS heavy environments | Vendor dependency; limited custom logic |
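The N² scaling of point-to-point integration is easy to check with arithmetic. Assuming every pair of systems needs one link, a fully connected mesh of n systems needs n(n-1)/2 links, while a hub needs one link per system. The function names below are illustrative.

```python
def point_to_point_links(n: int) -> int:
    """Links needed when every pair of n systems is connected directly."""
    return n * (n - 1) // 2


def hub_links(n: int) -> int:
    """Links needed when each of n systems connects only to a central hub."""
    return n


for n in (3, 10, 30):
    print(n, point_to_point_links(n), hub_links(n))
# 3 systems:  3 direct links vs 3 hub links
# 10 systems: 45 direct links vs 10 hub links
# 30 systems: 435 direct links vs 30 hub links
```

At three systems the two approaches cost the same, which is why point-to-point feels harmless early on. If each direction of a pair needs its own interface, the mesh count doubles to n(n-1); growth is quadratic either way.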

Selecting the right pattern is not a purely technical decision. Organizations with fewer than 10 integrated systems and limited DevOps capacity often benefit from an API-led approach, which provides clear contracts without imposing the operational overhead of a full ESB. Enterprises running dozens of legacy systems alongside modern cloud platforms frequently need a hybrid strategy: an event-driven backbone for high-throughput workflows and an API gateway layer for external-facing integrations. Examining cloud solution integration examples from comparable industries can reveal how others have navigated this trade-off before committing to a single architecture.
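The decoupling that an event-driven backbone provides can be illustrated with a small in-process publish/subscribe sketch. The `EventBus` class and the `order.created` topic are hypothetical, and a real deployment would use a message broker rather than an in-memory dictionary. The publisher never references its consumers, which is the property that matters.

```python
from collections import defaultdict
from typing import Callable

Subscriber = Callable[[dict], None]


class EventBus:
    """In-memory pub/sub: publishers know topics, not subscribers."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Subscriber]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Subscriber) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver the event to every subscriber; return how many received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


billing_inbox: list[dict] = []
analytics_inbox: list[dict] = []

bus = EventBus()
bus.subscribe("order.created", billing_inbox.append)
bus.subscribe("order.created", analytics_inbox.append)

delivered = bus.publish("order.created", {"order_id": "A-100", "total": 250})
print(delivered, billing_inbox, analytics_inbox)
```

Adding a third consumer requires one more `subscribe` call and no change to the publishing system, in contrast to a point-to-point design where the publisher would need a new direct connection.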

Reviewing best practices for enterprise software efficiency alongside your pattern selection is equally important. Efficiency gains from integration are only sustained when the architectural pattern aligns with the organization’s actual operational tempo and governance maturity.

Pro Tip: When evaluating patterns, design for the system you will have in three years, not just the one you have today. Organizations that choose a modular foundation, loosely coupled components with well-defined interfaces, preserve architectural agility without needing to rebuild from scratch as systems evolve.

Integration pitfalls and risk management

With integration patterns mapped, teams need to understand where even well-designed efforts can unravel. The failure modes are not random. They follow predictable patterns rooted in architectural shortcuts, cultural blind spots, and governance gaps.

Each approach carries its own trade-off. Point-to-point is quick but fragile, producing spaghetti integrations. A centralized ESB scales but risks becoming a bottleneck. iPaaS is agile for cloud workloads but struggles with legacy systems. AI-generated integrations tend to fail on edge cases and security, with 62% introducing vulnerabilities. Over-engineering a simple need wastes resources.

The most commonly underestimated risk category in 2026 is AI-assisted integration. Generative AI tools can produce integration logic rapidly, and that speed is genuinely valuable. However, organizations that deploy AI-generated connectors without rigorous review are putting risk into production at speed. Edge cases, unusual data payloads, timeout scenarios, and security boundary conditions are exactly where AI-generated code is most likely to fall short. A 62% vulnerability rate at edge conditions is not a theoretical concern; it is a measurable operational risk that demands structured code review and automated security testing as mandatory gates.

“Most integration failures are not caused by choosing the wrong technology. They are caused by treating integration as a technology problem while ignoring the organizational and governance context in which it runs.”

The bullet list below captures the most critical risk factors and their associated mitigation levers:

- Spaghetti point-to-point connections: Each new system multiplies the connection count quadratically. Mitigation requires establishing a maximum threshold for direct integrations before mandating a hub or gateway.

- ESB single points of failure: Centralized ESB architectures are powerful but introduce bottlenecks. Mitigation requires active-active clustering and circuit breaker patterns.

- Ungoverned AI-generated integrations: Speed of generation does not equal production readiness. Mitigation requires mandatory security review, integration testing, and edge case cataloging before deployment.

- Data governance absence: When integration is treated as purely technical, data ownership, quality, and lineage are ignored. Mitigation requires assigning data stewards to every integration project from day one.

- Siloed project ownership: Integration that lives only in the IT department fails to account for business process changes. Mitigation requires cross-functional steering from business, compliance, and operations.
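The circuit breaker pattern named in the ESB mitigation can be sketched in a few lines. This is a simplified illustration under stated assumptions: the thresholds, the `CircuitBreaker` class, and the injectable `clock` parameter are all hypothetical choices, and production libraries offer richer state handling. After a run of consecutive failures the breaker stops calling the downstream system for a cool-down period, then lets one trial call through.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at = None

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; call skipped")
            # Cool-down elapsed: allow one trial call (half-open state).
            # One more failure will immediately re-open the circuit.
            self._opened_at = None
            self._failures = self.failure_threshold - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        self._failures = 0
        return result


def erp_unavailable():
    raise ConnectionError("ERP unavailable")


fake_now = [0.0]  # controllable clock so the example runs instantly
breaker = CircuitBreaker(failure_threshold=2, reset_after=10.0, clock=lambda: fake_now[0])
for _ in range(2):
    try:
        breaker.call(erp_unavailable)
    except ConnectionError:
        pass
# The circuit is now open: further calls fail fast until 10 seconds pass.
```

Failing fast protects the central hub from piling up blocked requests against a system that is already down.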

For teams managing integration risk, establishing integration-specific monitoring at system boundaries is non-negotiable. Application performance monitoring (APM) tools designed for single-system observability miss cross-system failure patterns. Boundary monitoring, which tracks latency, error rates, and data integrity at every integration point, is a distinct discipline that requires deliberate tooling and ownership.
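As a rough sketch of what boundary monitoring means in code, the context manager below records call counts, error counts, and elapsed time per integration point. The `BoundaryMonitor` class and the `crm->erp` label are hypothetical, and data integrity checks are out of scope here; a real setup would export these numbers to a metrics system.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class BoundaryMonitor:
    """Tracks calls, errors, and cumulative latency per integration boundary."""

    def __init__(self) -> None:
        self.calls = defaultdict(int)
        self.errors = defaultdict(int)
        self.total_seconds = defaultdict(float)

    @contextmanager
    def track(self, boundary: str):
        start = time.perf_counter()
        try:
            yield
        except Exception:
            self.errors[boundary] += 1
            raise
        finally:
            # Runs on success and on failure, so every call is counted.
            self.calls[boundary] += 1
            self.total_seconds[boundary] += time.perf_counter() - start

    def error_rate(self, boundary: str) -> float:
        calls = self.calls[boundary]
        return self.errors[boundary] / calls if calls else 0.0


monitor = BoundaryMonitor()

with monitor.track("crm->erp"):
    pass  # a successful cross-system call would go here

try:
    with monitor.track("crm->erp"):
        raise TimeoutError("ERP did not respond")
except TimeoutError:
    pass

print(monitor.calls["crm->erp"], monitor.error_rate("crm->erp"))
```

The measurements are keyed by boundary, not by application, which is what lets a team see that one specific hop is degrading.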

Organizations running automated workflows at scale should also review security in automation as a separate discipline. Automation amplifies both efficiency and vulnerabilities. A single misconfigured automation touching financial data can propagate errors across dozens of downstream systems before any human detects it.

Pro Tip: Scale your governance and monitoring practices at the same rate as your technical stack. Every new integration added without a corresponding governance artifact, an owner, a data contract, an error recovery plan, increases the organization’s systemic risk profile even when the integration itself is well-built.
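One lightweight way to enforce this tip is to check for the governance artifacts before an integration is allowed to ship. The sketch below assumes a hypothetical `IntegrationRecord` registry entry with three required fields taken from the tip above; the field names and example values are illustrative only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class IntegrationRecord:
    """Hypothetical registry entry describing one integration."""

    name: str
    owner: Optional[str] = None
    data_contract: Optional[str] = None
    recovery_plan: Optional[str] = None


REQUIRED_ARTIFACTS = ("owner", "data_contract", "recovery_plan")


def missing_artifacts(record: IntegrationRecord) -> list[str]:
    """Return the governance artifacts that are absent or empty."""
    return [field for field in REQUIRED_ARTIFACTS if not getattr(record, field)]


draft = IntegrationRecord(name="crm-to-erp-sync", owner="finance-ops")
gaps = missing_artifacts(draft)
print(gaps)  # ['data_contract', 'recovery_plan']
```

Run as a deployment gate, a check like this turns the governance requirement into something that blocks a release instead of something that is reviewed after an incident.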

Best practices for successful integration

Knowing what not to do sets the stage for proven practices that maximize integration ROI and resilience. The following list reflects field-tested strategies that consistently distinguish successful programs from stalled ones.

- Treat integration as a business problem first. Define the business outcome before specifying any technical solution. What decision, process, or customer experience does this integration enable? Answering this question prevents over-engineering and keeps scope manageable.

- Establish a roadmap before the first line of code. A phased roadmap that sequences integrations by business value and technical dependency reduces the risk of architectural regret. High-value, low-complexity integrations should come first to build organizational confidence and governance muscle.

- Formalize data governance from day one. Define data ownership, quality standards, transformation rules, and lineage documentation as deliverables, not afterthoughts. Integration’s impact on ROI is directly correlated with data quality: clean data flowing between systems compounds value; dirty data compounds errors.

- Execute a phased rollout with checkpoint gates. Deploy in controlled increments, each with defined success criteria and rollback procedures. This approach reduces blast radius when issues arise and allows teams to course-correct before they are fully committed.

- Monitor integration boundaries differently from application health. Invest in dedicated boundary monitoring that tracks inter-system latency, message throughput, error rates, and data fidelity. These metrics are invisible to standard APM tools.

- Apply low-code tools with explicit guardrails. Low-code empowers non-IT staff to build integrations, but it needs oversight for edge cases. Set clear boundaries on where business users can build integrations autonomously versus where engineering review is mandatory.

Hybrid integration environments, those mixing on-premise legacy infrastructure with cloud-native SaaS platforms, deserve special attention. Hybrid integrations take 56% longer than greenfield cloud integrations on average, and that duration premium is often invisible in initial project estimates. Organizations that plan for it avoid budget and schedule overruns. For further context on structuring hybrid projects for success, reviewing frameworks on efficient project delivery can help teams set realistic expectations.

The table below maps common integration risk scenarios to recommended controls, giving teams a practical starting reference.

| Risk scenario | Recommended control |
|---|---|
| Legacy system with proprietary data format | Dedicated transformation layer with versioned schema registry |
| Cloud migration of business-critical workflow | Parallel-run period with reconciliation reporting before cutover |
| Security exposure in API-led integration | OAuth 2.0 enforcement, API gateway rate limiting, penetration testing |
| AI-generated connector in production | Mandatory code review gate, edge case test suite, staged deployment |
| Low-code integration by business team | Governance review board approval, automated anomaly alerting |
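The parallel-run control in the table can be illustrated with a small reconciliation sketch. It assumes both the legacy and the migrated workflow can export their results as a mapping from a record ID to an amount in cents; the `reconcile` function and the invoice IDs are hypothetical.

```python
def reconcile(legacy: dict, migrated: dict) -> dict:
    """Compare outputs of the legacy and migrated workflows for one period."""
    missing = sorted(legacy.keys() - migrated.keys())
    unexpected = sorted(migrated.keys() - legacy.keys())
    mismatched = sorted(
        key for key in legacy.keys() & migrated.keys() if legacy[key] != migrated[key]
    )
    return {
        "missing": missing,        # in legacy output only
        "unexpected": unexpected,  # in migrated output only
        "mismatched": mismatched,  # present in both, values differ
        "clean": not (missing or unexpected or mismatched),
    }


# Amounts in cents to avoid floating-point comparison issues.
legacy_run = {"INV-1": 1000, "INV-2": 2500, "INV-3": 400}
migrated_run = {"INV-1": 1000, "INV-2": 2550, "INV-4": 900}

report = reconcile(legacy_run, migrated_run)
print(report)
```

Cutover would proceed only after the report stays clean across an agreed number of consecutive periods, a threshold each team has to set for itself.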

Using a software selection checklist when evaluating platforms and tooling ensures that capability gaps are surfaced before contracts are signed, not after go-live when switching costs are prohibitive.

Why most integration advice misses the business reality

Most published guidance on enterprise integration focuses almost entirely on technology selection: which ESB to choose, which iPaaS platform to license, which API gateway to deploy. This framing is understandable because technology is concrete and describable. But it fundamentally misidentifies where most integration programs fail.

Failed integrations trace back, almost universally, to cross-team misalignment and absent data governance. A technically sound event-driven architecture built by a team that has no shared agreement on who owns the data, who resolves conflicts, and what happens when business rules change is an expensive liability. The platform is irrelevant. The organizational conditions determine the outcome.

Tech leaders who consistently succeed at integration treat it as a business transformation investment from the first conversation. They involve business process owners, compliance teams, and finance stakeholders before a single vendor is evaluated. They frame integration decisions around business outcomes, such as reducing order-to-cash cycle time or enabling real-time regulatory reporting, rather than around technical capabilities. This framing changes the governance conversation entirely. When integration has a measurable business owner, accountability, prioritization, and budget defense all become more straightforward.

The rise of AI and automation makes the human and process side of integration more consequential, not less. Automation can compress execution time from hours to milliseconds, which means a governance gap that once produced a slow, detectable error now produces a fast, systemic one. Organizations that invest in cultural alignment around data ownership, and in awareness of strategic integration pitfalls, will find that their technology choices matter far less than their governance choices. The platform does not save you from organizational dysfunction. Good governance does.

Accelerate integration with next-gen solutions

Building a well-governed, scalable integration architecture is demanding work, and it is far more achievable when the foundational components are already in place before the first sprint begins. Bitecode’s modular platform addresses this directly, enabling organizations to start with up to 60% of the baseline system pre-built and then customize rapidly to match their specific workflows, compliance requirements, and data models.

For enterprises pursuing AI-driven automation, the AI Assistant Module provides a ready-to-deploy foundation that accelerates intelligent workflow integration without introducing unreviewed, AI-generated code into production. For organizations that need secure, auditable financial processing across distributed systems, the Blockchain payment system module delivers cryptographic integrity and transaction transparency as a configurable component. Both capabilities are designed to integrate cleanly with existing enterprise stacks, reducing the time from strategy to production-grade deployment.

Frequently asked questions

What are the biggest risks with enterprise software integration?

The primary risks are fragile point-to-point connections, security gaps in AI-generated automations, and treating integration as a purely technical challenge rather than a business and governance problem.

How do you choose the best integration pattern for your business?

Factor in system count, team maturity, cloud adoption, and governance readiness, then match against the five core patterns (point-to-point, ESB, event-driven, API-led, iPaaS) to find the best fit for your specific scenario.

What percentage of AI-driven integrations introduce vulnerabilities?

62% of AI-generated integrations introduce edge-case or security vulnerabilities when deployed without structured code review and edge case testing protocols.

Is low-code a good approach for enterprise integration?

Low-code accelerates delivery and empowers business users, but it needs oversight for edge cases and clear governance boundaries to keep ungoverned integrations from entering production and creating downstream risk.