TL;DR:

- Many enterprise failures stem from rigid architectures that hinder adaptation rather than from poor code. Modular software built on self-contained, standardized components enhances fault isolation, accelerates updates, and enables easier integration of technologies like AI and blockchain. However, successful adoption requires disciplined boundary management, organizational readiness, and staged implementation to avoid operational complexity and chaos.

Most enterprise software failures don’t start with bad code. They start with rigid architecture that made perfect sense at launch and became a liability the moment the business needed to change. The uncomfortable reality is that many organizations are still running monolithic systems that treat every component as a single, indivisible block, making even small updates a high-stakes exercise. Could modular software be the quiet force behind the world’s most adaptive enterprises? The evidence strongly suggests yes, and the case for shifting strategy is both urgent and practical for any organization serious about operational resilience and technology integration.

Key Takeaways

| Point | Details |
|---|---|
| Isolation for reliability | Modular software reduces operational risks by isolating failures and making upgrades targeted and safe. |
| Faster tech adoption | With modularity, adding AI, blockchain, or other advanced tools becomes quicker and less disruptive. |
| Efficiency gains | Maintenance, updates, and integration processes are streamlined, saving time and costs at scale. |
| Mind the complexity trade-off | More modules can mean more operational overhead, so organizations must balance flexibility with manageability. |

Why modular software? The strategic context

Now that we’ve set the stage for the need to evolve, let’s break down what modularity means and why it’s central to modern enterprise strategy.

Modular software is built from discrete, self-contained components that each handle a specific function and communicate through standardized interfaces. Think of it as a professional Lego system for IT: each block is purpose-built, snaps in without disrupting the rest of the structure, and can be swapped or upgraded independently. This analogy isn’t just illustrative. It reflects how modular monoliths work in practice, where well-bounded domains coexist within a single deployable unit before teams are ready to distribute further.

Monolithic architectures, by contrast, bundle all functionality into one tightly coupled codebase. Updating one module often means retesting and redeploying the entire system. As organizations grow, this design introduces cascading risks: a bug in the payment module can freeze the reporting service. A scaling decision for one feature carries costs for every unrelated function. The blast radius of any failure is effectively the entire application.

The operational case for modularity is well-documented. As recent research on modular systems confirms, modular approaches improve fault isolation and targeted evolution by enforcing boundaries between components, which can reduce the blast radius of failures and speed up maintenance. That translates directly into fewer emergency deployments, shorter incident windows, and more predictable release cycles.

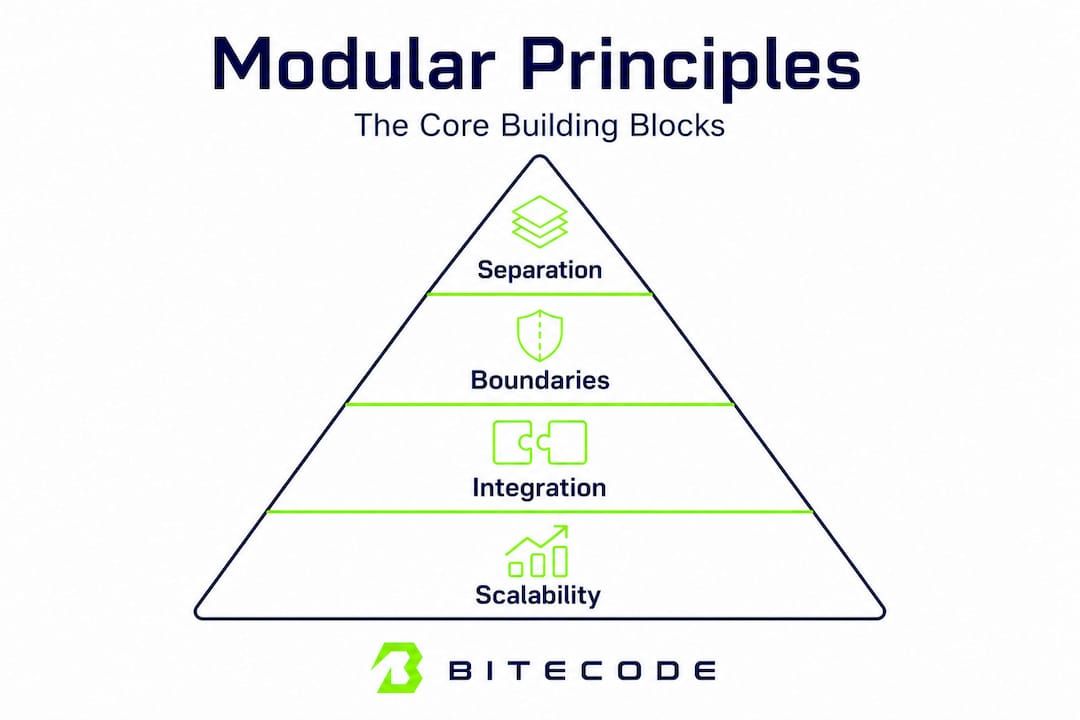

The core advantages break down as follows:

- Maintainability: Teams can modify a single module without risk to unrelated services.

- Quick updates: Isolated deployments mean faster release cycles with less regression testing overhead.

- Targeted scaling: Resource-intensive components scale independently, avoiding over-provisioning across the entire system.

- Technology integration: New tools, APIs, and advanced capabilities plug in at the module level rather than requiring a system-wide refactor.

These aren’t theoretical gains. Organizations following enterprise software best practices consistently find that modular design reduces both the cost and the risk of change over time.

Anatomy of modular software: Building blocks and boundaries

Understanding the “why” behind modularity leads us to the “how.” What actually defines a modular system, and how does this impact your IT operations day to day?

Three core principles define genuine modularity. Separation of concerns ensures each component handles one distinct domain, whether that’s authentication, billing, or reporting. Standardized interfaces allow modules to communicate through defined contracts, typically APIs or event streams, without exposing internal logic. Encapsulation keeps the inner workings of each module private, so changes inside one component don’t ripple unpredictably through others. As WJARR 2025 research demonstrates, modular architecture enables reusability, scalability, and enhanced fault isolation via separation of concerns and standardized interfaces.
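As a rough illustration of these three principles, the sketch below uses Python's `typing.Protocol` to express a contract between two modules. The names `BillingService`, `InMemoryBilling`, and `monthly_report` are hypothetical and chosen only for this example; a real system would define its own contracts.

```python
from typing import Protocol


class BillingService(Protocol):
    """Standardized interface: the only surface other modules depend on."""

    def charge(self, customer_id: str, amount_cents: int) -> str: ...

    def total_charged(self, customer_id: str) -> int: ...


class InMemoryBilling:
    """Hypothetical billing module. Its storage is private (encapsulation)."""

    def __init__(self) -> None:
        # Internal detail, not part of the contract.
        self._ledger: dict[str, list[int]] = {}

    def charge(self, customer_id: str, amount_cents: int) -> str:
        if amount_cents <= 0:
            raise ValueError("amount_cents must be positive")
        entries = self._ledger.setdefault(customer_id, [])
        entries.append(amount_cents)
        # Return a simple per-customer charge reference.
        return f"{customer_id}-{len(entries)}"

    def total_charged(self, customer_id: str) -> int:
        return sum(self._ledger.get(customer_id, []))


def monthly_report(billing: BillingService, customer_ids: list[str]) -> dict[str, int]:
    """Reporting module: a separate concern that sees only the contract."""
    return {cid: billing.total_charged(cid) for cid in customer_ids}
```

Because `monthly_report` depends only on `BillingService`, the ledger could move from a dictionary to a database without any change to the reporting code.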

The table below maps key modular features to their operational impact:

| Modular feature | Operational benefit |
|---|---|
| Plug-and-play integration | Add new capabilities without system-wide changes |
| Parallel development | Multiple teams work simultaneously on separate modules |
| Isolated deployments | Reduce regression risk and release cycle time |
| Standardized interfaces | Simplify vendor and third-party integrations |
| Encapsulated logic | Easier debugging and faster root-cause analysis |

For organizations looking to modularize an existing system, a staged approach works far better than a big-bang rewrite:

- Audit the existing codebase to identify natural domain boundaries and high-coupling hot spots.

- Define the interfaces before extracting any component. Contracts must be clear before code moves.

- Extract the least-coupled components initially, typically logging, notifications, or authentication services.

- Establish independent deployability for extracted modules before moving to core business logic.

- Iterate inward toward the most complex domains once confidence in the process builds.
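The audit and "least-coupled first" steps above can be approximated with a simple ranking. The sketch below assumes the audit has already produced a map of which module depends on which; the module names and the scoring rule (fan-out plus fan-in) are illustrative assumptions, not a standard metric.

```python
from collections import defaultdict


def rank_by_coupling(dependencies: dict[str, set[str]]) -> list[tuple[str, int]]:
    """Rank modules from least to most coupled.

    Coupling is approximated as fan-out (modules this one depends on)
    plus fan-in (modules that depend on this one).
    """
    fan_in: dict[str, int] = defaultdict(int)
    modules = set(dependencies)
    for targets in dependencies.values():
        for target in targets:
            modules.add(target)
            fan_in[target] += 1
    scores = {m: len(dependencies.get(m, set())) + fan_in[m] for m in modules}
    # Sort by score, then by name so the order is deterministic.
    return sorted(scores.items(), key=lambda item: (item[1], item[0]))


# Hypothetical dependency map produced by a codebase audit.
example = {
    "billing": {"auth", "notifications", "reporting"},
    "reporting": {"auth", "billing"},
    "notifications": set(),
    "auth": set(),
}
```

With this input, `rank_by_coupling(example)` places `notifications` and `auth` ahead of `reporting` and `billing`, which matches the advice to leave core business logic for later iterations.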

Pro Tip: Resist the urge to distribute everything at once. Modular workflow design works best when boundaries are clear before you add the complexity of network hops and distributed state.

Consider a real-world scenario: a financial services firm wants to layer an AI module into its transaction monitoring workflow. In a modular system, the AI service integrates through a defined event interface. The billing module sees no change. The compliance reporting module continues uninterrupted. The rollout takes weeks, not quarters, because the boundary work was done up front.
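A minimal sketch of that kind of event interface follows. Everything here is hypothetical: `TransactionRecorded`, `EventBus`, `BillingTotals`, and `AnomalyMonitor` are invented for illustration, and a fixed threshold stands in for whatever model a real AI module would use.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class TransactionRecorded:
    """Hypothetical event contract shared between modules."""
    transaction_id: str
    amount_cents: int


class EventBus:
    """Minimal in-process publish/subscribe bus."""

    def __init__(self) -> None:
        self._handlers: dict[type, list[Callable]] = defaultdict(list)

    def subscribe(self, event_type: type, handler: Callable) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: object) -> None:
        for handler in list(self._handlers[type(event)]):
            handler(event)


class BillingTotals:
    """Existing subscriber; unchanged when new subscribers are added."""

    def __init__(self) -> None:
        self.total_cents = 0

    def on_transaction(self, event: TransactionRecorded) -> None:
        self.total_cents += event.amount_cents


class AnomalyMonitor:
    """Stand-in for an AI module; a threshold replaces a real model."""

    def __init__(self, threshold_cents: int) -> None:
        self.threshold_cents = threshold_cents
        self.flagged: list[str] = []

    def on_transaction(self, event: TransactionRecorded) -> None:
        if event.amount_cents > self.threshold_cents:
            self.flagged.append(event.transaction_id)
```

Adding the monitor is a single `bus.subscribe(TransactionRecorded, monitor.on_transaction)` call; `BillingTotals` needs no edits, which is the point of integrating at the event boundary.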

Modular software in action: Efficiency, AI, and blockchain integration

Once we understand the structure and benefits, it’s crucial to see the real-world impact. How does modularity unlock new capabilities for enterprises embracing technologies like AI and blockchain?

The contrast between modular and monolithic approaches becomes sharpest when organizations try to adopt new technologies. Consider the comparison below:

| Integration type | Modular system | Monolithic system |
|---|---|---|
| AI assistant module | Plug in via API, isolated testing, 2-6 week rollout | Requires core refactor, high regression risk, 3-9 months |
| Blockchain payment layer | Add as independent service, parallel development possible | Touches payment and ledger logic simultaneously, high risk |
| CRM upgrade | Swap module without touching other domains | Data model changes cascade across the system |
| Compliance update | Targeted change in compliance module only | Review and retest entire system for audit trail |

These differences aren’t marginal. They define whether an organization can respond to market shifts in weeks or get stuck in multi-month planning cycles.

For AI adoption specifically, modular design removes the most common blocker: the fear that integrating a new capability will destabilize core operations. With well-defined boundaries, an AI inference module can be added, tested in isolation, and rolled back if needed, all without touching the billing or reporting layers. The enterprise software trends most relevant to 2026 consistently identify this isolation capability as a primary driver of faster AI adoption in large organizations.
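One way to picture "added, tested in isolation, and rolled back" is a slot that holds an optional module behind a stable call site. The sketch below is an assumption-laden illustration: `OptionalModuleSlot`, `keyword_scorer`, and `broken_scorer` are hypothetical names, and a keyword check stands in for a real inference model.

```python
from typing import Callable, Optional

Scorer = Callable[[str], float]


class OptionalModuleSlot:
    """Holds an optional module behind a stable call site.

    If no module is enabled, or the module raises, callers get a default.
    """

    def __init__(self, default: float) -> None:
        self._default = default
        self._scorer: Optional[Scorer] = None
        self.failures = 0

    def enable(self, scorer: Scorer) -> None:
        self._scorer = scorer

    def rollback(self) -> None:
        """Disable the module; core callers keep working with the default."""
        self._scorer = None

    def score(self, text: str) -> float:
        if self._scorer is None:
            return self._default
        try:
            return self._scorer(text)
        except Exception:
            # Contain the fault inside the slot instead of failing the caller.
            self.failures += 1
            return self._default


def keyword_scorer(text: str) -> float:
    """Placeholder for a real model: flags messages containing 'urgent'."""
    return 1.0 if "urgent" in text.lower() else 0.0


def broken_scorer(text: str) -> float:
    """Simulates a module that fails at runtime."""
    raise RuntimeError("model unavailable")
```

Core code calls `slot.score(...)` in every case, so enabling, breaking, or rolling back the module never changes the billing or reporting call paths.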

Blockchain integration benefits similarly. When a blockchain payment layer is designed as a standalone module with a standard interface to the payment service, organizations can upgrade consensus mechanisms or switch underlying protocols without a system-wide audit. As modular systems research confirms, organizations evolving toward leading-edge technologies like AI and blockchain benefit most when those capabilities can be introduced without costly rework.

The efficiency metrics that enterprises report after modular adoption are notable:

- Reduction in mean-time-to-recovery (MTTR) during incidents, often by 40 to 60 percent

- Shorter deployment cycles, moving from bi-monthly to weekly or daily releases

- Lower regression testing costs due to isolated change scope

- Faster compliance responses when regulations shift

That last point deserves emphasis. Regulatory compliance is one of the most underestimated use cases for modular design. When a new data privacy requirement lands, a modular system allows teams to identify exactly which components handle personal data, update only those modules, and produce targeted audit documentation. A monolithic system requires a far broader review with correspondingly higher risk and cost.
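Identifying "exactly which components handle personal data" becomes a lookup if each module declares what it touches. The sketch below assumes a hypothetical per-module manifest; the field names, category labels, and team names are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModuleManifest:
    """Hypothetical declaration each module ships alongside its code."""
    name: str
    owner: str
    data_categories: frozenset[str] = field(default_factory=frozenset)


def modules_handling(manifests: list[ModuleManifest], category: str) -> list[str]:
    """Return the names of modules that declare the given data category."""
    return sorted(m.name for m in manifests if category in m.data_categories)


# Example manifests; the categories are illustrative labels only.
MANIFESTS = [
    ModuleManifest("billing", "payments-team", frozenset({"personal", "financial"})),
    ModuleManifest("reporting", "analytics-team", frozenset({"aggregated"})),
    ModuleManifest("crm", "sales-team", frozenset({"personal"})),
]
```

Here `modules_handling(MANIFESTS, "personal")` narrows the review to `billing` and `crm`. The result is only as reliable as the declarations, so manifests like these need to be reviewed alongside code changes.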

Nuance in modularity: Complexity, compliance, and organizational realities

Modular software design isn’t without its trade-offs. In complex enterprises, a nuanced approach is essential to avoid swapping one set of challenges for another.

The first and most important nuance is that distributed modularity relocates complexity rather than eliminating it. When components communicate across network boundaries, teams must manage service discovery, distributed tracing, latency, and failure modes that don’t exist in a single-process application. As IJIRMPS 2025 research notes, distributed boundaries can shift complexity into operations, including observability, deployment orchestration, and debugging across modules. Some organizations therefore benefit from starting with a modular monolith, which builds boundary discipline without the distributed-system overhead until scaling demands it.

The hidden operational costs of premature distribution include:

- Observability tooling: Distributed tracing and centralized logging become non-negotiable, not optional.

- Orchestration overhead: Container scheduling, service meshes, and deployment pipelines add engineering maintenance burden.

- Cross-module debugging: Tracing a failure across five services is inherently harder than reading a single stack trace.

- Data consistency management: Distributed transactions require careful design to avoid subtle correctness bugs.

- Increased onboarding complexity: New engineers must understand network contracts and failure modes, not just business logic.
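Cross-module debugging is usually made tractable by carrying a correlation ID with every request. The sketch below shows the idea in a single process; `Envelope`, `TRACE_LOG`, `checkout`, and `charge` are hypothetical names, and a real deployment would rely on its tracing and logging stack rather than an in-memory list.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Envelope:
    """Hypothetical message wrapper passed between modules."""
    payload: dict
    correlation_id: str = field(default_factory=lambda: uuid.uuid4().hex)


# Each entry is (correlation_id, module, message).
TRACE_LOG: list[tuple[str, str, str]] = []


def record(envelope: Envelope, module: str, message: str) -> None:
    TRACE_LOG.append((envelope.correlation_id, module, message))


def charge(envelope: Envelope) -> bool:
    """Billing step: rejects non-positive amounts."""
    record(envelope, "billing", "charging card")
    if envelope.payload.get("amount_cents", 0) <= 0:
        record(envelope, "billing", "rejected: non-positive amount")
        return False
    return True


def checkout(envelope: Envelope) -> bool:
    """Checkout step: hands the same envelope on to billing."""
    record(envelope, "checkout", "order accepted")
    return charge(envelope)


def trace(correlation_id: str) -> list[tuple[str, str]]:
    """Reassemble one request's path across modules."""
    return [(mod, msg) for cid, mod, msg in TRACE_LOG if cid == correlation_id]
```

Filtering on one ID reconstructs a single request's path across modules, which is what distributed tracing tools do at larger scale.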

Compliance adds another layer. When modularity must satisfy external certification requirements, as it does in regulated industries, decision-makers should evaluate the full supply chain of tooling ownership, interface documentation quality, and test throughput. As Modular Open Systems Approach (MOSA) implementation practice demonstrates, these factors can outweigh real agility gains if left unmanaged.

Pro Tip: If your organization doesn’t yet have mature observability practices, a modular monolith is the right starting point. Extract services only when a specific scaling or isolation need justifies the operational overhead. Discipline at the boundary is more valuable than distribution for its own sake.
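Boundary discipline inside a modular monolith can be checked mechanically. The sketch below uses Python's standard `ast` module and assumes a hypothetical convention in which each module keeps private code under a package named `_internal`; the convention and the function name are illustrative, not an established tool.

```python
import ast


def boundary_violations(
    source: str, own_module: str, internal_marker: str = "_internal"
) -> list[str]:
    """Return imports that reach into another module's private package."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        for name in names:
            parts = name.split(".")
            # Private packages are fine inside the owning module only.
            if internal_marker in parts and parts[0] != own_module:
                violations.append(name)
    return violations


# Hypothetical source of a file belonging to the "reporting" module.
REPORTING_SOURCE = """
import billing.api
from billing._internal.ledger import Ledger
from reporting._internal import formatters
"""
```

Run against `REPORTING_SOURCE` with `own_module="reporting"`, the check reports only `billing._internal.ledger`. It is deliberately simple and would miss forms such as `from billing import _internal`, so treat it as a starting point for a CI check rather than a complete linter.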

Before committing to a full modular transformation, organizations should work through this decision checklist:

- Does the current team have experience managing distributed systems or microservices?

- Is observability tooling already in place and actively maintained?

- Does the compliance framework require documentation at the interface level?

- Is there a genuine scaling or team-autonomy need that modularity will address?

- Is there executive support for the organizational change that modular adoption requires?

The software selection steps that successful organizations follow always include an honest audit of organizational maturity, not just technical ambition.

Why the rush to modular software sometimes backfires

With these nuances in mind, it’s time for an opinion grounded in experience about what really makes modular software adoption succeed or fail in the enterprise.

The pattern we see most often isn’t a failure of technology. It’s a failure of discipline. Organizations hear “modular” and interpret it as permission to distribute everything immediately, spinning up dozens of independently deployed services before they have the processes, tooling, or team structure to support them. The result isn’t agility. It’s a distributed monolith: all the operational complexity of microservices with none of the boundary clarity that makes modularity valuable.

The uncomfortable lesson is that modularity is a lever, not a destination. Pulling the lever without a clear strategy and staged adoption plan amplifies whatever organizational dysfunction already exists. Teams that lack clear ownership before modularization end up with modules that no one fully owns after it. As the practical principle goes: many organizations chase flexibility and end up with chaos. Discipline at the boundaries defines success.

The change management dimension is equally underestimated. Modular adoption requires teams to think differently about ownership, interface contracts, and inter-module communication. That’s a cultural shift as much as a technical one. Organizations that treat it purely as an architecture decision, ignoring the human and process side, consistently struggle more than those that invest in clear documentation and module ownership from day one.

The comparison between a bespoke software partner and a large agency often reveals this gap clearly: a partner with deep modular experience will insist on boundary documentation and ownership clarity before writing a line of code. An agency optimizing for speed will hand over a distributed system that the client’s team is unprepared to operate.

Pro Tip: Before any modular transformation begins, assign explicit ownership for every module boundary, including who documents the interface, who monitors it in production, and who approves breaking changes. Without this, “modular” becomes a synonym for “nobody’s problem.”

The decision-makers who succeed with modularity audit their real organizational needs first. They ask whether the pain they’re solving is actually an architecture problem or a process and ownership problem. Often, the answer is both, and the architecture choice must be matched with the organizational investment to support it.

Explore modular solutions for your enterprise

Ready to take the next step toward smarter, more agile software? These resources and solutions make it easier to start modular without the runaway risks.

Bitecode.tech builds enterprise systems using a modular foundation that starts with up to 60 percent of the baseline system pre-built, giving organizations a head start on both architecture discipline and delivery speed. Whether the priority is an AI assistant module for intelligent workflow automation, a blockchain payment solution for transparent financial processing, or a custom CRM module tailored to specific sales and service workflows, each component is designed with clear boundaries and standardized interfaces from day one.

The modular approach means organizations aren’t locked into a single vendor’s monolithic platform. Instead, they get the flexibility to integrate, scale, and evolve each capability independently, with the discipline and documentation that prevent modular meltdown. Consulting with the Bitecode.tech team starts with an honest assessment of organizational readiness and a staged roadmap that matches ambition with operational reality.

Frequently asked questions

What is modular software in enterprise IT?

Modular software is built from independent components that interact through well-defined interfaces, making it easier to update, scale, and integrate new functions. As WJARR 2025 research confirms, this architecture enables reusability, scalability, and enhanced fault isolation via separation of concerns and standardized interfaces.

How does modular software improve operational efficiency?

By isolating faults and enabling targeted updates, modular systems reduce downtime, speed up maintenance, and streamline technology integration. Research on modular approaches shows that enforcing boundaries between components reduces the blast radius of failures and accelerates maintenance cycles.

Are there risks in fully embracing modularity?

Over-distribution can increase operational complexity and require better observability and orchestration tools, so a staged approach or modular monolith often makes more sense. IJIRMPS 2025 findings note that distributed boundaries shift complexity into operations, which teams must be prepared to manage before fully distributing their architecture.

Can modular software help with AI and blockchain adoption?

Yes, modular systems allow rapid integration of new technologies like AI and blockchain without costly or risky system-wide changes. Recent research confirms that organizations evolving toward these technologies benefit most when capabilities can be introduced through modular boundaries without requiring costly rework across the entire system.