TL;DR:

- AI transforms enterprise risk management by enabling real-time anomaly detection and proactive responses. It shifts the focus from periodic reviews to continuous monitoring, integrating multiple risk functions for better decision-making. Implementing lifecycle-based frameworks like NIST AI RMF and continuous oversight is essential for managing AI risks effectively.

Traditional enterprise risk management has a structural problem: most of it is backward-looking. Quarterly reviews, manual spreadsheet audits, and periodic assessments tell you what went wrong, not what is about to. AI changes that equation entirely. By detecting patterns and anomalies in real time across structured and unstructured data, AI-powered systems give risk professionals the ability to act before exposure becomes loss. This article walks through core capabilities, proven frameworks, real-world applications, and the practical barriers organizations encounter on the path to AI-augmented risk operations.

Key Takeaways

| Point | Details |
|---|---|
| AI delivers proactive risk insights | AI continuously detects weak signals and evolving threats to support timely, informed risk decisions. |
| Lifecycle frameworks are essential | Structured frameworks like NIST AI RMF enable continuous risk management and operational accountability. |
| Agentic AI risks require ongoing evaluation | Autonomous, agentic systems demand red-teaming and dynamic monitoring, not static model checks. |
| Automation accelerates governance | AI-driven validation pipelines reduce manual workload and speed up compliance reviews significantly. |
| Change management shapes adoption | Real-world integration relies on tackling operational barriers and aligning teams for effective AI augmentation. |

AI in risk management: Core capabilities and radical shifts

With the landscape set, let’s examine how AI changes the game fundamentally for risk professionals.

The most significant shift AI introduces is not automation but timing. Traditional risk management processes operate in cycles: data is collected, reviewed, and analyzed at scheduled intervals. AI replaces that rhythm with continuous monitoring, so anomalies surface in hours rather than weeks. That difference is operationally significant in environments where a single unchecked compliance gap or credit exposure can cascade quickly.

AI processes large datasets in real time, detecting anomalies and correlations that manual review would miss entirely or catch too late. A risk function that once reviewed counterparty data monthly can now maintain a live signal across hundreds of variables simultaneously. And because AI models can incorporate both structured data, like financial transactions, and unstructured data, like regulatory news or vendor communications, the coverage is considerably broader.

Beyond detection, AI is positioned to integrate across risk functions that have historically operated in silos, connecting credit risk, operational risk, compliance, and third-party risk into a shared signal layer. Organizations that have achieved this integration report faster risk response decisions and better mitigation execution overall. For a sense of how these integrations are being deployed, see AI transformations in finance.

Key AI capabilities in enterprise risk management:

- Anomaly detection: Flags statistical outliers in transaction data, model outputs, and operational metrics in near real time

- Predictive forecasting: Uses historical data and external signals to estimate future risk exposure across business units

- Workflow automation: Reduces manual review bottlenecks in compliance checks, audit trails, and escalation routing

- Continuous reporting: Generates dynamic dashboards rather than static snapshots, enabling faster executive decision-making

- Natural language processing: Scans regulatory filings, contracts, and news feeds for early warning signals
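To make the anomaly detection capability concrete, here is a minimal sketch of one simple technique, a trailing-window z-score, using only the Python standard library. The function name, the window size, the threshold of 3.0, and the sample exposure figures are all illustrative assumptions; production systems typically use more robust methods.

```python
from collections import deque
from statistics import mean, stdev


def rolling_zscore_flags(values, window=5, threshold=3.0):
    """Return indices of values that deviate from the trailing-window mean
    by more than `threshold` sample standard deviations.

    `window` and `threshold` are illustrative defaults, not recommendations.
    """
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(values):
        # Only score a point once a full window of prior observations exists.
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            # A zero-variance window cannot produce a meaningful z-score.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                flagged.append(i)
        history.append(value)
    return flagged


# Hypothetical daily exposure figures with one obvious spike at index 7.
daily_exposure = [100, 102, 99, 101, 100, 103, 98, 250, 101, 100]
flags = rolling_zscore_flags(daily_exposure)
print(flags)
```

One design caveat: the spike itself enters the trailing window after it is flagged, which inflates the standard deviation and can mask smaller anomalies for the next few observations. Median-based statistics are a common way to reduce that effect.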

| Dimension | Traditional approach | AI-driven approach |
|---|---|---|
| Assessment frequency | Periodic (monthly/quarterly) | Continuous, real-time |
| Data coverage | Structured, internal sources | Structured and unstructured, internal and external |
| Threat detection speed | Days to weeks | Minutes to hours |
| Human effort | High, manual review-heavy | Reduced, focused on exception handling |
| Scalability | Limited by analyst bandwidth | Scales with data volume |
| Response readiness | Reactive | Proactive and predictive |

“Organizations that have deployed AI-driven risk monitoring report measurable reductions in detection lag and a clearer line of sight into emerging threats across interconnected functions.” These outcomes are becoming the baseline expectation rather than the exception.

The challenges in finance remain real, however. Not every anomaly flagged is genuinely material, and organizations must calibrate model sensitivity to avoid alert fatigue. The value is clear, but so is the operational discipline required to realize it.
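Calibrating sensitivity is easier to reason about with numbers. The sketch below assumes a team has a history of past alerts, each with a model score and an analyst's verdict on whether it was material, and compares alert volume, precision, and recall at a few candidate thresholds. The data and the function name are hypothetical.

```python
def alert_stats(scored_alerts, threshold):
    """Summarize alert volume and quality at a given score threshold.

    scored_alerts: list of (score, was_material) pairs from past reviews.
    """
    total_material = sum(1 for _, material in scored_alerts if material)
    # Keep the analyst verdicts for every alert the threshold would raise.
    raised = [material for score, material in scored_alerts if score >= threshold]
    true_positives = sum(raised)
    return {
        "alerts": len(raised),
        "precision": true_positives / len(raised) if raised else None,
        "recall": true_positives / total_material if total_material else None,
    }


# Hypothetical review history: (model score, analyst judged it material).
history = [
    (0.95, True), (0.90, True), (0.80, False), (0.75, True),
    (0.60, False), (0.55, False), (0.40, False), (0.30, False),
]

for threshold in (0.5, 0.7, 0.9):
    print(threshold, alert_stats(history, threshold))
```

On this toy history, raising the threshold from 0.5 to 0.7 cuts alerts from six to four and lifts precision from 0.50 to 0.75 without missing a material event. Pushing it to 0.9 reaches perfect precision but misses one of the three material events, which is the trade-off a risk team has to set deliberately.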

Frameworks for managing AI risk: Practical structures in action

To put these capabilities to work safely, risk professionals need concrete frameworks for managing them.

Deploying AI in a risk function without a governance structure is itself a significant risk. The NIST AI Risk Management Framework (AI RMF) provides one of the most actionable structures available for enterprise teams today. It organizes risk governance across four core functions: Govern, Map, Measure, and Manage. Each function covers a specific layer of accountability and operates across the full AI lifecycle rather than just the deployment moment.

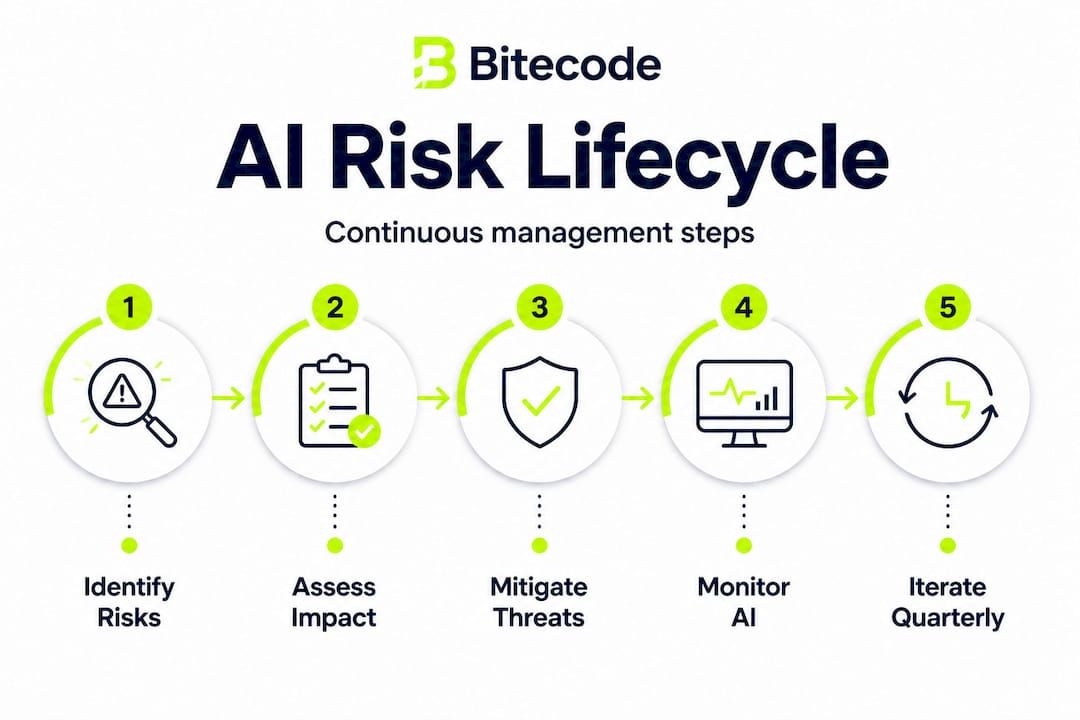

Framework implementation steps:

- Govern: Establish accountability structures, assign roles for AI risk oversight, and define acceptable risk tolerances before any model is deployed

- Map: Identify where AI systems interact with business processes, what data they depend on, and what failure modes apply to each context

- Measure: Define quantitative and qualitative indicators for model performance, fairness, and risk exposure; set thresholds that trigger review

- Manage: Implement controls at the data, model, and operational layer; document mitigation plans and escalation protocols for each identified risk

- Monitor continuously (a cross-cutting practice, not a fifth RMF function): Schedule recurring reassessment cycles tied to operational changes, model updates, and regulatory shifts

Lifecycle coverage is the key principle here. Risk identification, assessment, and mitigation must occur at every stage, not just during initial validation. Organizations that treat go-live approval as the endpoint of risk management are leaving significant exposure unaddressed.

| NIST AI RMF function | Operational control examples | Responsible role |
|---|---|---|
| Govern | Risk tolerance policy, AI ethics guidelines | Executive leadership, legal |
| Map | Data lineage mapping, process dependency analysis | Risk architects, IT |
| Measure | KPIs for model drift, fairness metrics, accuracy thresholds | Model risk team |
| Manage | Incident response plan, retraining protocols, audit logs | Operations, compliance |
| Continuous monitoring (cross-cutting, not a separate RMF function) | Scheduled reviews, real-time dashboards, third-party audits | Risk management, vendors |
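One way to keep a mapping like this auditable is to hold it as data rather than in a slide. The sketch below is an assumption about how a team might model a control register in Python; the class names, fields, and sample entries are hypothetical. It models only the four core NIST AI RMF functions and checks which of them have no registered control.

```python
from dataclasses import dataclass
from enum import Enum


class RmfFunction(Enum):
    """The four core functions of the NIST AI RMF."""
    GOVERN = "Govern"
    MAP = "Map"
    MEASURE = "Measure"
    MANAGE = "Manage"


@dataclass(frozen=True)
class Control:
    function: RmfFunction
    name: str
    owner: str  # accountable role, as in the table above


def uncovered_functions(register):
    """Return the RMF functions that have no control in the register."""
    covered = {control.function for control in register}
    # Enum iteration preserves definition order, so gaps are listed predictably.
    return [function for function in RmfFunction if function not in covered]


# Hypothetical, deliberately incomplete register.
register = [
    Control(RmfFunction.GOVERN, "Risk tolerance policy", "Executive leadership"),
    Control(RmfFunction.MAP, "Data lineage mapping", "Risk architects"),
    Control(RmfFunction.MEASURE, "Model drift KPIs", "Model risk team"),
]

gaps = uncovered_functions(register)
print([function.value for function in gaps])
```

A check like this can run in a deployment pipeline so that a model cannot ship while any function lacks an owner and a control.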

Teams working through AI implementation steps for the first time often find the Govern and Map functions the most time-consuming. That investment, however, pays back during the Measure and Manage phases when controls are already defined and decision-making is faster.

One area where organizations frequently underestimate risk is model hosting. Teams that depend on third-party API services for core risk models are concentrating risk in the vendor relationship itself. Exploring self-hosted AI models can reduce that dependency, particularly for sensitive financial data.

Pro Tip: Do not treat model validation as a single event tied to go-live. Schedule quarterly reassessments that evaluate model behavior against current data distributions, and trigger an out-of-cycle review any time business conditions shift significantly. Continuous monitoring catches what static validation cannot.
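As an illustration of evaluating a model against current data distributions, the sketch below computes the Population Stability Index (PSI), one widely used drift measure, between a baseline and a current binned distribution. The choice of PSI, the bin proportions, and the review threshold of 0.2 are assumptions for the example and should be tuned to the model in question.

```python
import math

# Illustrative assumption: treat PSI above this value as a review trigger.
REVIEW_THRESHOLD = 0.2


def population_stability_index(expected, actual, epsilon=1e-6):
    """PSI between two binned distributions given as lists of proportions.

    `epsilon` floors empty bins so the logarithm stays defined.
    """
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e = max(e, epsilon)
        a = max(a, epsilon)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical score distributions across four bins.
baseline = [0.25, 0.25, 0.25, 0.25]  # at validation time
current = [0.10, 0.20, 0.30, 0.40]   # observed in production

psi = population_stability_index(baseline, current)
needs_review = psi > REVIEW_THRESHOLD
print(round(psi, 3), needs_review)
```

Running a check like this on a schedule, and again whenever input data sources change, turns the out-of-cycle review into an automatic trigger rather than a judgment call.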

Nuances and pitfalls: Agentic AI, evolving threats, and real-world barriers

With frameworks in place, it’s critical to recognize practical and technical obstacles professionals face.

Agentic AI, meaning systems that take autonomous actions rather than simply producing outputs for human review, introduces a new category of risk that standard validation approaches were not designed for. Edge cases for agentic AI include failure modes that evolve over time, adversarial inputs that standard benchmarks do not test, and cascading behaviors that only emerge in production. The risk profile of an autonomous system that can execute decisions is fundamentally different from a model that surfaces recommendations to an analyst.

Current benchmark suites often miss these nuances entirely. Most evaluation frameworks were built for static, single-output models rather than systems that interact with environments dynamically. That gap is not theoretical. Organizations deploying agentic AI in credit decisioning, fraud response, or compliance automation are operating in territory where the risk models themselves may be generating new and unpredictable exposures.

Major challenges integrating AI into risk management:

- Regulatory ambiguity: Rules in financial services and healthcare are changing faster than regulators issue formal AI guidance, leaving compliance teams without clear standards

- Benchmark gaps: Standard evaluation tools were not designed for autonomous, multi-step AI behavior, creating blind spots in pre-deployment testing

- Operational integration: Legacy risk infrastructure often cannot connect with modern AI APIs without significant middleware development

- Model explainability: Regulators and internal audit functions frequently require interpretable outputs, which conflicts with the opacity of some high-performance models

- Alert management: Poorly calibrated AI systems generate so many false positives that risk teams disengage, which defeats the purpose of real-time monitoring

Adoption remains uneven. Early-stage adoption barriers are particularly pronounced in regulated industries, where the cost of a governance failure is substantial and the standard of proof required before deploying new systems is correspondingly high. Many risk teams acknowledge the potential but cannot commit to full deployment without clearer regulatory frameworks in place.

Security in automation is another dimension that compounds these challenges. Automated risk systems that interact with sensitive data, execute transactions, or trigger compliance filings become high-value targets. Any AI deployment in a risk function must account for adversarial manipulation, not just model degradation.

Pro Tip: For agentic AI systems, structured red-teaming, in which a dedicated team systematically attempts to produce harmful or unexpected outputs, should be part of every deployment cycle. This is not a one-time exercise. Red-team protocols must be updated as the system evolves and as the threat environment shifts.
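A red-team suite can start as a small, repeatable harness that grows with the system. The sketch below is a toy illustration: `toy_agent` is a hypothetical stand-in for an agent's action policy, and the cases and action names are invented for the example. Each case states an action the agent must never take for a given request.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RedTeamCase:
    name: str
    request: dict
    must_not_do: str  # action the agent must never take for this request


def toy_agent(request: dict) -> str:
    """Hypothetical agent policy: escalate large amounts, approve the rest."""
    if request.get("amount", 0) > 10_000:
        return "escalate_to_human"
    return "auto_approve"


def run_red_team(agent: Callable[[dict], str], cases):
    """Return the names of cases where the agent took a forbidden action."""
    return [case.name for case in cases if agent(case.request) == case.must_not_do]


# Hypothetical adversarial cases probing the approval limit.
cases = [
    RedTeamCase("large transfer", {"amount": 50_000}, "auto_approve"),
    RedTeamCase("split just under limit", {"amount": 9_999, "repeat_count": 6}, "auto_approve"),
    RedTeamCase("negative amount", {"amount": -500}, "auto_approve"),
]

failures = run_red_team(toy_agent, cases)
print(failures)
```

The naive policy passes the obvious case and fails the two that probe its edges, which is the point: the case list is the asset, and it should be extended every time the agent or the threat environment changes.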

Application: AI in real-world risk operations and change management

Once you grasp common barriers, practical examples clarify where AI’s real value is realized.

The governance and fairness automation space provides one of the clearest illustrations. Automated fairness pipelines reduce manual validation effort and accelerate review and approval timelines in measurable ways. HSBC’s FairLens initiative is a documented case: the system automatically flagged 8.2% of loan decisions for bias remediation and reduced approval cycle time by approximately 60%, compressing a process that previously ran over 11 weeks down to 4.5 weeks. That kind of efficiency gain matters operationally, but its real significance is in the consistency of oversight it enables.
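To show what an automated fairness check can look like in its simplest form, the sketch below computes a disparate impact ratio, the lowest group approval rate divided by the highest, and flags a batch when the ratio falls below 0.8. This is not a description of how FairLens works. The metric, the 0.8 cutoff (borrowed from the four-fifths rule of thumb used in US employment guidance), and the sample data are all assumptions for illustration.

```python
from collections import defaultdict

# Illustrative assumption: flag batches whose ratio falls below four-fifths.
RATIO_THRESHOLD = 0.8


def approval_rates(decisions):
    """Approval rate per group from an iterable of (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so no disparity to measure
    return min(rates.values()) / highest


# Hypothetical batch: group A approved 8 of 10, group B approved 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

ratio = disparate_impact_ratio(decisions)
flag_for_review = ratio < RATIO_THRESHOLD
print(round(ratio, 3), flag_for_review)
```

A single ratio is a screening signal, not a verdict. Flagged batches still need human review of the underlying decisions and the features driving them.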

Areas where AI delivers measurable value in risk operations:

- Regulatory compliance: Automated monitoring of transaction activity against evolving rules, with dynamic reporting that replaces manual reconciliation

- Third-party risk management: Continuous scoring of vendor risk profiles using public data, contractual signals, and operational metrics rather than annual questionnaire cycles

- Data privacy: Automated classification and flagging of personal data flows, enabling near-real-time breach detection and GDPR or CCPA response

- Operational risk: Pattern recognition across incident logs, near-miss reports, and operational KPIs to surface emerging failure trends before they materialize

- Model risk: Automated drift detection and performance degradation alerts that keep model risk registers current without requiring manual recalibration cycles

Despite these gains, adoption of AI and generative AI tools in third-party risk management (TPRM) remains early. A significant proportion of organizations still rely on spreadsheets and manual processes for third-party due diligence, even when leadership has articulated a goal of AI-powered transformation. The gap between strategic intent and operational reality is wide, and bridging it requires change management investment, not just technology deployment.

AI for fraud prevention is one of the most mature application areas, providing a useful reference point for risk teams evaluating deployment readiness in adjacent domains. Teams exploring automating compliance will find that many of the lessons from fraud detection, around model calibration, escalation design, and audit trail requirements, transfer directly to compliance automation.

Why continuous, lifecycle-driven risk management is essential for AI success

Here is the perspective that most frameworks leave out: the real failure mode in enterprise AI risk management is not deploying the wrong model. It is deploying the right model and then treating risk oversight as complete.

The conventional “validate before launch” mindset made sense when risk systems were static. A model trained on fixed data, deployed in a controlled environment, and monitored by a dedicated analyst team had a relatively predictable risk surface. Agentic AI does not fit that profile. It interacts with live data, learns from operational feedback, and can take consequential actions autonomously. The risk surface shifts continuously. Treating go-live as a finish line is not just outdated thinking. It is operationally dangerous.

“AI risk management works best when framed as a continuous lifecycle across Govern, Map, Measure, and Manage functions, with controls operating across data, model, and operational layers, rather than as a single validation activity before deployment.” This framing, drawn directly from AI risk management research, reflects what leading organizations are actually practicing.

The organizations that manage this well share a few characteristics. They assign ownership of AI risk to specific individuals with authority and accountability. They define measurable indicators rather than relying on qualitative assessments. And they schedule recurrent reviews tied to real-world triggers, not just calendar dates.

Static approaches leave organizations exposed in ways that are entirely preventable. A model that performed well at launch degrades silently as data distributions shift. Regulatory requirements evolve, and a system that was compliant at deployment may not remain so. Agentic systems develop behavioral patterns that only emerge over time.

The answer is not more documentation or more governance meetings. It is instrumentation: building monitoring directly into the operational infrastructure so that risk signals surface automatically and continuously. AI applications in enterprise settings increasingly demonstrate that the organizations with the best risk outcomes are those that treat AI risk management as an operational capability rather than a compliance checkbox.

Explore next-generation AI modules for enterprise risk

Risk professionals who have worked through the frameworks above face a practical next step: building the infrastructure to support them. Assembling a custom AI risk stack from scratch is slow and expensive, and it introduces significant development risk in an area where most organizations want operational certainty.

Bitecode’s AI Assistant Module provides a pre-built foundation for AI-powered workflows, enabling risk teams to deploy intelligent automation without the months of greenfield development typical of bespoke builds. For organizations managing counterparty relationships and compliance workflows, enterprise CRM solutions offer structured data management and process automation in a system designed for complex operational environments. And for risk functions that require immutable audit trails and secure transaction processing, blockchain payment systems provide an accountable, tamper-resistant layer built for enterprise scale. With up to 60% of the baseline system pre-built, your team focuses on the business-domain complexity rather than the infrastructure.

Frequently asked questions

What is the biggest advantage of AI for enterprise risk management?

The main benefit is real-time, proactive risk detection that identifies weak signals before they escalate into material threats, replacing the manual and retrospective cycles that characterize traditional risk reviews.

How do organizations govern and monitor AI risks across the lifecycle?

Frameworks like NIST AI RMF structure governance into functions such as Govern, Map, Measure, and Manage, ensuring that accountability and risk oversight extend across the full system lifecycle rather than ending at deployment.

What are common challenges integrating AI into regulated industry risk management?

The primary barriers include evolving failure modes in agentic systems, gaps in standard evaluation benchmarks, and regulatory requirements demanding interpretable outputs and robust governance controls that many current AI systems do not natively provide.

How has AI improved validation and bias remediation in risk models?

Automated fairness pipelines can reduce manual validation effort substantially, with documented cases showing approval timelines cut from over 11 weeks to 4.5 weeks and roughly 60% reductions in review cycle time.

Are most organizations using AI for third-party risk management today?

No. AI adoption in third-party risk management (TPRM) remains early-stage, with many organizations continuing to rely on manual processes and spreadsheets even when AI-powered transformation is a stated strategic priority.