TL;DR:

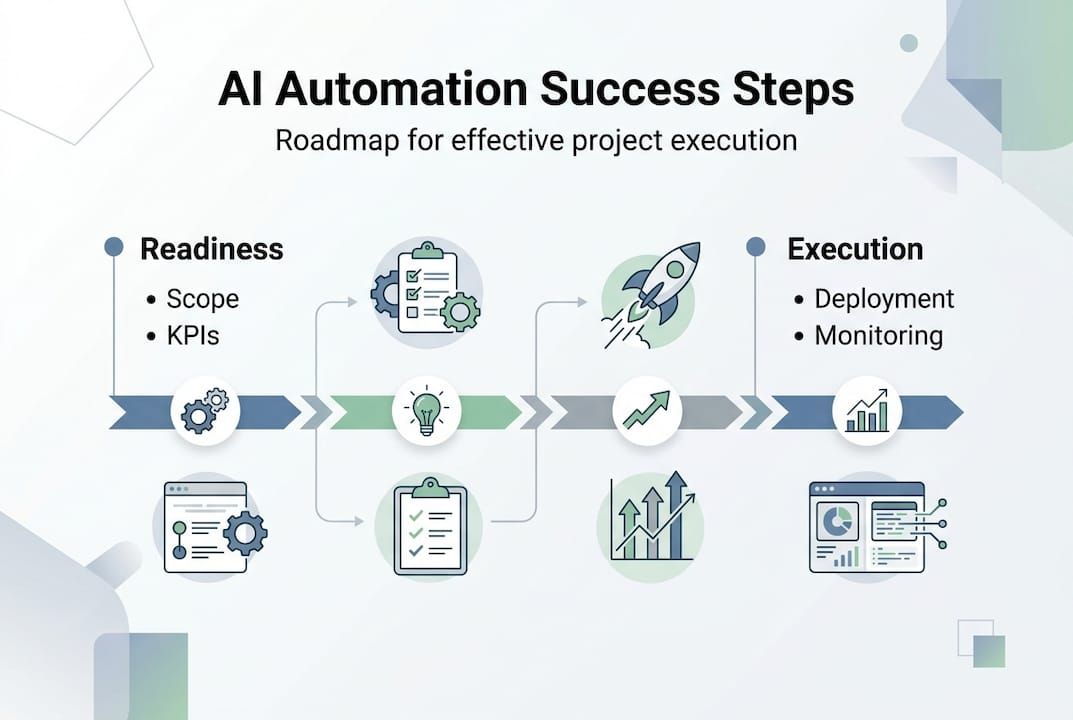

- Assess organizational readiness and focus on high-ROI, low-complexity processes before starting AI automation.

- Build aligned, multidisciplinary teams with clear roles and strong stakeholder communication for successful implementation.

- Choose scalable, secure tools with robust MLOps capabilities and implement disciplined deployment and monitoring practices.

Operational inefficiency is a real cost. Slow approval workflows, manual data entry, disconnected systems, and reactive decision-making drain enterprise resources and erode competitive advantage at a measurable rate. AI automation promises to eliminate these bottlenecks, but the difference between a successful implementation and a stalled pilot comes down to strategy. This guide walks IT leaders and enterprise decision-makers through a practical, structured blueprint for deploying AI automation rapidly and effectively, covering readiness assessment, team structure, tool selection, and execution best practices that accelerate work without accelerating chaos.

Key Takeaways

| Point | Details |
|---|---|
| Start with high-ROI pilots | Focus on low-complexity, high-return projects to demonstrate value and manage risk. |
| Build a cross-disciplinary team | Involving IT, business, and governance from the beginning ensures smoother implementation and adoption. |
| Use scalable tech and MLOps | Choose tools with fleet-native MLOps features for reliable scaling with minimal overhead. |
| Automate monitoring and iteration | Continuous monitoring and rapid iteration help sustain automation benefits and performance. |
| Culture drives lasting success | Prioritizing user empowerment and change management is key for enterprise-wide impact, not just technical gains. |

Assessing readiness and setting project scope

Having established the pressing need for action, let’s discuss how to evaluate whether your organization is ready and which projects to start with.

Most failed AI automation initiatives share a common early mistake: teams jump to tooling before they have clarity on problems worth solving. The first discipline of successful implementation is structured discovery. Before evaluating any platform or vendor, organizations need an honest inventory of their processes, their data, and their people.

Start by mapping pain points systematically. Look for processes characterized by high volume, predictable rules, repetitive steps, and documented error rates. Accounts payable reconciliation, customer onboarding verification, IT service desk triage, and compliance document review are classic candidates. Understanding the different business automation types early in this phase prevents teams from misclassifying what they actually need.

Key readiness criteria to evaluate:

- Data quality and availability: AI models are only as good as the data they train on. Assess completeness, consistency, and accessibility of process data.

- Process documentation: Automation requires defined rules. Undocumented institutional knowledge is a risk factor, not a feature.

- Technical infrastructure: Evaluate API availability, cloud readiness, and integration points with existing systems.

- Cultural receptiveness: Change resistance is the most underestimated readiness gap. Gauge how process owners respond to automation proposals.

- Governance readiness: Compliance frameworks, audit requirements, and risk management protocols must be accounted for before go-live.

Once readiness is mapped, scope the initial project with discipline. Experience with automation strategies suggests that teams that prioritize high-ROI, low-complexity pilots with clear KPIs, such as a 30 to 70% reduction in cycle time, before scaling see markedly better adoption and return.
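The prioritization described above can be sketched as a simple ranking. This is a minimal illustration, not a prescribed method: the `CandidateProcess` fields, the 1-to-5 complexity scale, the "manual hours per unit of complexity" heuristic, and all sample figures are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateProcess:
    name: str
    monthly_volume: int       # transactions per month (hypothetical figure)
    minutes_per_item: float   # manual handling time per transaction
    complexity: int           # assumed scale: 1 (rule-based) to 5 (judgment-heavy)

    @property
    def monthly_hours(self) -> float:
        """Manual hours this process consumes each month."""
        return self.monthly_volume * self.minutes_per_item / 60


def rank_candidates(processes):
    """Order candidates by manual hours per unit of complexity, highest first."""
    return sorted(
        processes,
        key=lambda p: p.monthly_hours / p.complexity,
        reverse=True,
    )


# Illustrative numbers only; replace with measured baselines.
candidates = [
    CandidateProcess("AP reconciliation", 4000, 6.0, 2),
    CandidateProcess("Compliance document review", 300, 45.0, 4),
    CandidateProcess("Service desk triage", 2500, 3.0, 1),
]
ranked = rank_candidates(candidates)
for process in ranked:
    print(f"{process.name}: {process.monthly_hours:.0f} h/month, complexity {process.complexity}")
```

A ratio like this is deliberately crude. Its value is in forcing the team to write down volume, effort, and complexity for every candidate before debating which pilot to run.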

KPI framework for initial pilots:

| Process metric | Baseline measurement | Target after automation |
|---|---|---|
| Process cycle time | X hours/days | Reduce by 30-70% |
| Error rate | X% manual errors | Near-zero for rule-based tasks |
| Cost per transaction | $X per unit | Reduce by 40-60% |
| Employee hours consumed | X hours/week | Redirect to higher-value work |
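Checking a pilot against targets like those in the table is simple arithmetic. The sketch below shows one way to compute it. The function names and the sample cycle times are hypothetical, and the 30% floor is taken from the lower end of the range above.

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Return the reduction from baseline to current as a percentage."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) / baseline * 100


def meets_target(baseline: float, current: float, min_reduction_pct: float) -> bool:
    """True when the measured reduction reaches the minimum target."""
    return percent_reduction(baseline, current) >= min_reduction_pct


# Hypothetical pilot: cycle time drops from 48 hours to 18 hours.
cycle_time_reduction = percent_reduction(baseline=48, current=18)
print(f"Cycle time reduced by {cycle_time_reduction:.1f}%")
print("Meets 30% floor:", meets_target(48, 18, min_reduction_pct=30))
```

The baseline has to be measured before the pilot starts. A reduction computed against an estimated baseline is not evidence of anything.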

The right pilot is narrow enough to execute quickly, measurable enough to prove value, and representative enough to inform the broader rollout. A three-month pilot window is typically sufficient to generate meaningful data.

Pro Tip: Involve governance stakeholders, including legal, compliance, and risk, from the kickoff meeting, not after the pilot completes. Their early input shapes architecture decisions and prevents costly rework once you attempt to scale.

Building the right team and stakeholder alignment

Once you’ve scoped your initial projects, a capable and aligned team is your most important foundation.

Technology alone does not execute AI automation programs. People do. The organizational structure around an AI initiative directly determines whether it delivers measurable value or becomes a proof-of-concept that never scales. The team composition question isn’t simply “who builds it.” It’s “who owns it, who enables it, and who sustains it.”

A high-functioning implementation team spans multiple disciplines:

- IT architects and engineers: Own infrastructure, integration, security, and API connectivity. They define the technical feasibility of each candidate process.

- Business process owners: Bring domain expertise and process authority. They understand exceptions, edge cases, and what “good” looks like in practice.

- Data scientists or ML engineers: Design, train, validate, and maintain the models or automation logic that power each solution.

- Change management leads: Manage communication, training, and adoption with end users. Without this role, even well-built solutions fail in practice.

- Project sponsor (C-level): Provides executive air cover and budget authority, and removes organizational blockers that lower-level teams cannot address.

“Governance should be integrated from day one to manage implementation risks.” This means assigning clear accountability for risk at the project inception, not retroactively adding it once issues arise.

Adopting enterprise software best practices means treating stakeholder alignment as an engineering artifact, not a soft activity. Decision logs, responsibility matrices (RACI), and formal sign-off checkpoints are not overhead. They are risk management infrastructure.
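Treating a RACI matrix as an engineering artifact can be as simple as keeping it in a machine-checkable form. The sketch below assumes a plain dictionary layout and two common RACI conventions (exactly one Accountable role and at least one Responsible role per task). The task and role names are illustrative only.

```python
def validate_raci(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Return a list of problems found in a task -> {role: code} RACI matrix.

    Codes are "R", "A", "C", or "I". An empty list means no problems were found.
    """
    problems = []
    for task, assignments in matrix.items():
        accountable = [role for role, code in assignments.items() if code == "A"]
        if len(accountable) != 1:
            problems.append(
                f"{task}: expected exactly one Accountable role, found {len(accountable)}"
            )
        if "R" not in assignments.values():
            problems.append(f"{task}: no Responsible role assigned")
    return problems


# Hypothetical matrix; the second task is missing an Accountable role.
raci = {
    "Approve pilot scope": {
        "Project sponsor": "A",
        "Process owner": "R",
        "IT architect": "C",
    },
    "Sign off on go-live": {
        "Process owner": "R",
        "Compliance lead": "C",
    },
}
for problem in validate_raci(raci):
    print(problem)
```

Running a check like this at each sign-off checkpoint surfaces ownership gaps while they are still cheap to fix.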

One of the clearest patterns separating successful programs from stalled ones is the presence of a dedicated project champion. This person sits at the intersection of business and technology, translating executive strategy into sprint-level priorities and surfacing adoption concerns before they become blockers. The champion is not the project manager. They are the internal advocate, the problem-solver, and the connective tissue between workstreams.

Feedback loops with end users are equally non-negotiable. Requirements that emerge only from process owners miss the ground-level complexity that frontline users experience daily. Regular structured touchpoints, such as bi-weekly user feedback sessions during the pilot, generate the input needed to iterate effectively.

Pro Tip: Appoint your project champion before you finalize the project scope. Their participation in scoping ensures that the technical requirements actually reflect organizational realities, not just theoretical process maps.

Finally, communication cadence matters. Define who receives what information, at what frequency, and through which channel. Executive sponsors need milestone summaries. Process owners need weekly status on KPI movement. End users need clear, jargon-free communication about how their workflows will change and why.

Choosing tools, architecture, and MLOps for scalability

With your team in place, selecting the right tools and establishing efficient operational practices are next.

Tool selection is one of the most consequential decisions in any AI automation program. Choosing the wrong platform early creates technical debt that compounds as you scale. Choosing the right one creates a foundation that accelerates every subsequent initiative.

Evaluation criteria for enterprise AI automation platforms:

- Integration compatibility: Does the platform connect natively with your existing ERP, CRM, and data infrastructure through well-documented APIs? Integration friction is the most common deployment bottleneck.

- Security and compliance posture: Evaluate SOC 2 certification, data residency options, role-based access controls, and audit logging capabilities. Regulated industries have non-negotiable requirements here.

- Scalability architecture: Assess whether the platform is designed for fleet-scale operations or optimized for single-model use cases. These are fundamentally different products.

- Vendor support and roadmap transparency: Enterprise implementations require responsive, knowledgeable support. Evaluate escalation paths, SLA commitments, and the vendor’s track record on feature delivery.

- MLOps capabilities: Sustainable AI at scale requires automated deployment, monitoring, and retraining pipelines. Manual model management becomes unmanageable quickly.

On architecture, the choice between modular and monolithic approaches has lasting implications. Modular, API-first architectures allow teams to compose automation capabilities from interchangeable components, replace underperforming elements without rebuilding the whole system, and integrate new AI capabilities as they mature. Understanding end-to-end automation architecture is essential before committing to any single vendor’s ecosystem.
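To make the modular idea concrete, here is a minimal sketch of interchangeable components behind a shared interface, using Python's `typing.Protocol`. The `DocumentClassifier` interface, both implementations, and the queue names are hypothetical and stand in for whatever capabilities a real platform exposes.

```python
from typing import Protocol


class DocumentClassifier(Protocol):
    """Interface that any classifier component must satisfy."""

    def classify(self, text: str) -> str: ...


class KeywordClassifier:
    """Rule-based component: looks for the word 'invoice'."""

    def classify(self, text: str) -> str:
        return "invoice" if "invoice" in text.lower() else "other"


class PrefixClassifier:
    """Alternative component: relies on an assumed 'INV-' document prefix."""

    def classify(self, text: str) -> str:
        return "invoice" if text.startswith("INV-") else "other"


def route_document(text: str, classifier: DocumentClassifier) -> str:
    """Route a document using whichever classifier is plugged in."""
    routes = {"invoice": "accounts-payable-queue"}
    return routes.get(classifier.classify(text), "manual-review-queue")


# Swapping the component does not require changing the routing code.
print(route_document("Please find the invoice attached", KeywordClassifier()))
print(route_document("INV-2041 attached", PrefixClassifier()))
```

Because `route_document` depends only on the interface, an underperforming classifier can be replaced, for example by a trained model, without touching the rest of the workflow.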

The MLOps dimension is where many enterprises underestimate complexity. As the number of production AI models grows from one or two pilots to dozens of operational workflows, and eventually toward thousands of models, manual MLOps practices that work at pilot scale break down. Enterprise scale demands fleet-native MLOps that automates deployment, monitoring, and retraining, which avoids a headcount explosion in model maintenance. For teams managing enterprise automation processes across multiple business functions, fleet-native tooling is not optional.

Platform comparison for enterprise AI automation:

| Platform capability | Best suited for | Scale limitation | MLOps support |
|---|---|---|---|
| Low-code workflow automation | Business process teams | Single-department pilots | Limited native support |
| API-first ML platforms | Engineering-led teams | High (with proper architecture) | Strong pipeline tooling |
| Hyperscaler AI services | Cloud-native organizations | Very high | Native integrations |
| Custom modular builds | Complex, bespoke requirements | Unlimited with planning | Configurable |

Recommended tool selection process:

- Define your non-negotiable technical requirements before vendor conversations.

- Shortlist three to five platforms based on integration compatibility and security posture.

- Run a structured proof-of-concept (PoC) on a representative process, not a toy dataset.

- Evaluate PoC results against your KPI framework, not vendor benchmark claims.

- Assess total cost of ownership including licensing, integration labor, and ongoing maintenance.
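One way to keep the shortlist comparison honest is a weighted scoring matrix built on the evaluation criteria listed earlier. The sketch below is an illustration under stated assumptions: the weights, the 1-to-5 scoring scale, and both platform score sets are invented for the example and should be replaced by your own requirements and PoC results.

```python
def weighted_score(scores: dict[str, int], weights: dict[str, int]) -> float:
    """Return the weighted average of criterion scores (same scale as the scores)."""
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same criteria")
    return sum(weights[c] * scores[c] for c in weights) / sum(weights.values())


# Assumed weights (summing to 100) for the five evaluation criteria.
weights = {
    "integration": 30,
    "security": 25,
    "scalability": 20,
    "vendor_support": 10,
    "mlops": 15,
}

# Hypothetical PoC scores on a 1 (poor) to 5 (excellent) scale.
platform_a = {"integration": 4, "security": 5, "scalability": 3, "vendor_support": 4, "mlops": 2}
platform_b = {"integration": 3, "security": 4, "scalability": 5, "vendor_support": 3, "mlops": 5}

for name, scores in (("Platform A", platform_a), ("Platform B", platform_b)):
    print(f"{name}: {weighted_score(scores, weights):.2f}")
```

Agree on the weights before vendor conversations begin. Adjusting them after the scores are in defeats the purpose of the exercise.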

Execution: deployment, monitoring, and rapid iteration

Once you’ve chosen your tools and architecture, it’s time to deploy and manage your first pilots.

The gap between a validated tool selection and a live production deployment is where most implementation risk concentrates. Execution discipline determines whether a promising pilot delivers sustained value or regresses into a maintenance burden.

Structured deployment process:

- Configure in a staging environment first. Replicate production data conditions as closely as possible. Surprises in staging are recoverable. Surprises in production are expensive.

- Run parallel processing during initial go-live. Let the automated system process transactions alongside the existing manual process for two to four weeks. This validates accuracy without business risk.

- Instrument monitoring before launch, not after. Define which metrics you will track from day one: model accuracy, processing latency, exception rates, and user adoption rates.

- Establish a feedback intake channel for end users. A simple ticket category or a dedicated Slack channel collects the ground-level observations that monitoring dashboards miss.

- Set a structured iteration cadence. Weekly review of performance data, bi-weekly model evaluation, and monthly stakeholder reporting creates a rhythm that drives continuous improvement.
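The parallel-processing step above lends itself to a simple comparison report. The following sketch assumes both the manual and the automated process record one outcome per transaction ID. The function name, the data layout, and the sample outcomes are hypothetical.

```python
def parallel_run_report(manual: dict[str, str], automated: dict[str, str]):
    """Compare outcomes for transactions handled by both processes.

    Returns (agreement_rate, mismatched_ids). Transactions seen by only one
    side are ignored here; a real report would likely list them too.
    """
    shared = manual.keys() & automated.keys()
    mismatches = sorted(tid for tid in shared if manual[tid] != automated[tid])
    agreement = (len(shared) - len(mismatches)) / len(shared) if shared else 0.0
    return agreement, mismatches


# Hypothetical outcomes keyed by transaction ID.
manual_results = {"T1": "approve", "T2": "reject", "T3": "approve", "T4": "escalate"}
automated_results = {"T1": "approve", "T2": "reject", "T3": "reject", "T4": "escalate", "T5": "approve"}

rate, differing = parallel_run_report(manual_results, automated_results)
print(f"Agreement: {rate:.0%}, review needed for: {differing}")
```

Each mismatch deserves a manual look. Some will be automation errors, and some will turn out to be errors in the manual process that the pilot has exposed.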

Monitoring is not a passive activity. Well-executed pilots can reduce process cycle time by 30-70% when monitoring is proactive and iteration is fast. The teams that achieve this do not wait for users to report problems. They detect anomalies in model output distributions before errors reach business impact.
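One common way to quantify a shift in model output distributions is the Population Stability Index (PSI). The article does not name a specific technique, so PSI is used here purely as an illustration. The category counts are invented, and the 0.25 alert level is a commonly cited rule of thumb rather than a value from this guide.

```python
import math


def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """Compute PSI between a reference and a recent distribution of outputs.

    Both arguments are per-category (or per-bin) counts in the same order.
    Proportions are floored at eps to avoid taking the log of zero.
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions need the same number of bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    psi = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        e_pct = max(expected / expected_total, eps)
        a_pct = max(actual / actual_total, eps)
        psi += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return psi


# Hypothetical counts of predicted labels: reference period vs. last week.
reference = [50, 30, 20]
last_week = [20, 30, 50]

psi = population_stability_index(reference, last_week)
ALERT_LEVEL = 0.25  # assumed threshold; tune to your own tolerance
print(f"PSI = {psi:.3f}, investigate: {psi > ALERT_LEVEL}")
```

A check like this needs no ground-truth labels, which is why it can flag a problem before errors are confirmed downstream.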

For AI applications in finance, monitoring requirements are even more stringent. Drift in financial classification models or anomaly detection systems can generate regulatory exposure if not caught quickly. Build retraining triggers into your MLOps pipeline based on performance thresholds, not calendar schedules.
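A threshold-based retraining trigger, as opposed to a calendar-based one, might look like the sketch below. It assumes labeled outcomes eventually become available for each prediction. The class name, the rolling-window approach, and the sample threshold are assumptions for illustration, not features of any particular MLOps platform.

```python
from collections import deque


class RetrainingTrigger:
    """Signal retraining when rolling accuracy drops below a threshold."""

    def __init__(self, min_accuracy: float, window_size: int):
        self.min_accuracy = min_accuracy
        self.outcomes = deque(maxlen=window_size)  # keeps only the latest results

    def record(self, correct: bool) -> bool:
        """Record one verified prediction; return True if retraining is due."""
        self.outcomes.append(correct)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough data yet to judge
        accuracy = sum(self.outcomes) / len(self.outcomes)
        return accuracy < self.min_accuracy


# Hypothetical settings: retrain if accuracy over the last 4 results is under 75%.
trigger = RetrainingTrigger(min_accuracy=0.75, window_size=4)
for was_correct in [True, True, True, False, False]:
    if trigger.record(was_correct):
        print("Accuracy below threshold: queue a retraining job")
```

In production the window would be far larger, and the trigger would open a retraining job or an alert rather than print a message.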

Stat callout: According to research on AI automation program outcomes, well-structured pilots can deliver 30 to 70% reduction in process cycle time within the first quarter of deployment. The variance in that range is largely explained by the quality of monitoring and iteration practices, not the sophistication of the underlying model.

Pro Tip: Automate your monitoring alerts so that model performance degradation triggers notifications to the engineering team before users experience errors. Reactive monitoring is too slow for production AI systems operating at scale.

Compliance tracking deserves dedicated attention throughout execution. Maintain a living audit log of model decisions, retraining events, and any manual overrides. This documentation is essential for regulated industries and increasingly expected by enterprise risk and compliance functions regardless of industry.
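A living audit log is more useful to auditors when tampering is detectable. One way to sketch that is a hash-chained, append-only log in which each entry's hash covers the previous entry's hash. The entry layout and event fields below are hypothetical, and a real deployment would also need durable storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, event)}
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical events covering a model decision and a manual override.
audit_log = []
append_entry(audit_log, {"type": "model_decision", "model": "invoice-classifier", "output": "approve"})
append_entry(audit_log, {"type": "manual_override", "by": "analyst-17", "new_output": "reject"})
print("Log intact:", verify_log(audit_log))
```

The same structure can record retraining events, so one log answers both "what did the model decide" and "which model version decided it".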

Why effective scaling is about people, not just models

There is a persistent tendency in enterprise AI programs to treat scaling as fundamentally a technology problem. More compute, better models, more automated pipelines. The assumption is that once the technical foundation is solid, adoption and value follow naturally. That assumption is wrong, and it is expensive.

The most sophisticated AI automation architecture will fail to deliver ROI if the people operating within automated workflows do not understand, trust, or engage with the system. Cultural inertia is a genuine risk factor, not a soft concern to address in a change management sidebar. Organizations that treat adoption as an afterthought consistently see usage rates plateau far below the designed automation coverage, which means they are paying full implementation costs for fractional value.

The organizations that scale effectively invest in software best practices that center user empowerment alongside technical delivery. They explain what the model does and why. They create visible feedback mechanisms so users feel heard. They demonstrate early wins transparently rather than letting results speak for themselves through dashboards nobody reads.

Lasting automation value comes from enabling teams to operate at a higher level, not just deploying models that remove tasks from their plates. The distinction matters. One approach builds capability and engagement. The other builds dependency and resentment. Treating change management as a technical deliverable, with assigned owners, defined milestones, and measurable adoption KPIs, is the structural decision that separates programs that scale from programs that stall.

Accelerate your AI automation journey with Bitecode

Ready to put these strategies to work? Here’s how Bitecode can help accelerate your AI automation goals.

Bitecode’s modular platform is built specifically for organizations that cannot afford the 18-month custom development cycle but still need tailored, enterprise-grade automation. With up to 60% of baseline system components pre-built, teams can deploy working automation solutions in weeks rather than quarters.

The enterprise AI solutions available through Bitecode include AI assistants, intelligent workflow automation, and production-ready integration modules designed for complex enterprise environments. For sales and service operations, the custom CRM automation module delivers measurable efficiency gains without greenfield development overhead. If your organization is ready to move from strategy to deployment, Bitecode’s team provides the technical architecture and implementation expertise to close that gap quickly and reliably.

Frequently asked questions

What is the first step in AI automation implementation?

Start by assessing readiness, pinpointing high-ROI, low-complexity processes, and setting measurable KPIs before piloting any automation solution.

How do you choose the right AI automation tools for enterprise needs?

Select platforms that are scalable, secure, compatible with existing systems, and support fleet-native MLOps best practices for managing model deployment and retraining at scale.

What KPIs measure the success of AI automation?

Time reduction, cost savings, error rates, and user adoption are the primary metrics; clear pilot KPIs such as a 30-70% cycle time reduction provide a concrete performance target.

Why is governance crucial in AI automation projects?

Governance manages implementation risks and ensures continuous alignment between technical delivery and broader business objectives from the first day of the project.

How quickly can enterprises see results from AI automation?

High-ROI pilots can deliver a 30-70% reduction in process time within months when monitoring and iteration practices are executed with discipline from go-live.