Traditional transaction monitoring systems struggle with mounting regulatory complexity and high false positive rates that overwhelm compliance teams. Financial institutions face a critical challenge: detecting sophisticated fraud while managing operational costs and meeting stringent oversight requirements. AI implementations in SAP ERP increased risk detection by 37% and fraud detection by 52%, yet integrating these technologies demands careful navigation of explainability and regulatory frameworks. This guide explores how AI transforms transaction monitoring, addresses compliance challenges, and delivers actionable strategies for risk management professionals seeking to enhance fraud prevention capabilities.

Key Takeaways

| Point | Details |
|---|---|
| Performance gains | AI implementations in SAP ERP increased risk detection by 37 percent, fraud detection by 52 percent, and reduced false positives by 42 percent. |
| Explainability and oversight | Regulators require explainability and human oversight for AI models. |
| Hybrid models balance transparency | Combining AI with human review preserves transparency while maintaining automation efficiency. |
| Incremental deployment approach | Start with a single product line to validate performance and gradually scale to the enterprise. |

Understanding the role of AI in transaction monitoring

AI leverages machine learning algorithms to analyze transaction patterns at unprecedented scale and speed. Unlike rule-based systems that flag transactions matching predefined criteria, AI models identify subtle anomalies and correlations across millions of data points simultaneously. This capability transforms how financial institutions detect money laundering, fraud, and suspicious activity that evades traditional monitoring approaches.

The technology detects fraud signals that human analysts and static rules routinely miss. Machine learning models recognize complex behavioral patterns, unusual transaction sequences, and network relationships that indicate criminal activity. AI implementations in SAP ERP increased risk detection by 37% and fraud detection by 52%, reducing false positives by 42%. This dramatic improvement directly addresses the resource drain caused by investigating thousands of false alerts that plague compliance teams.

AI systems reduce false positives by learning from historical data and analyst feedback. Traditional systems generate alerts based on rigid thresholds, creating massive queues of legitimate transactions requiring manual review. Machine learning models continuously refine their understanding of normal versus suspicious behavior, focusing investigative resources on genuine threats. This efficiency gain allows compliance teams to process higher transaction volumes without proportional staff increases.

The technology supports continuous learning and adapts to evolving fraud tactics. Criminal organizations constantly develop new schemes to circumvent detection systems. AI models retrain on fresh data, identifying emerging patterns and adjusting detection parameters automatically. This adaptive capability maintains effectiveness against threats that would render static rule sets obsolete within months.

Key advantages of AI in transaction monitoring include:

- Pattern recognition across multiple data dimensions simultaneously

- Real-time risk scoring that prioritizes high-threat transactions

- Network analysis revealing hidden relationships between accounts

- Behavioral profiling that establishes customer-specific baselines

- Automated model updates responding to new fraud typologies
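To make behavioral profiling concrete, here is a minimal sketch that scores a new transaction against a customer's own history using a z-score. The function name `baseline_risk_score`, the `min_history` cutoff, and the sample amounts are all illustrative assumptions; production systems typically use far richer features than amount alone.

```python
from statistics import mean, stdev


def baseline_risk_score(history, amount, min_history=5):
    """Return how many standard deviations `amount` sits from the customer's mean.

    `history` is a list of the customer's past transaction amounts.
    Returns None when there is too little history to form a baseline.
    """
    if len(history) < min_history:
        return None  # hypothetical policy: fall back to other controls
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # Every past amount was identical; any deviation is maximally unusual.
        return 0.0 if amount == mu else float("inf")
    return (amount - mu) / sigma


# Made-up history for one customer
history = [100.0, 120.0, 80.0, 110.0, 90.0]
typical = baseline_risk_score(history, 105.0)
unusual = baseline_risk_score(history, 900.0)
```

Here `typical` stays well under one standard deviation while `unusual` lands far above any reasonable threshold, which is the customer-specific contrast a fixed global threshold cannot express.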

Pro Tip: Integrate AI incrementally by starting with a single product line or transaction type. This approach allows you to validate model performance, tune detection thresholds, and build operational workflows before expanding to enterprise-wide deployment. Gradual implementation reduces risk while demonstrating value to stakeholders.

The AI assistant module enables compliance teams to query transaction data using natural language, accelerating investigation workflows and reducing the technical barrier to accessing AI insights.

Navigating regulatory and explainability challenges with AI

Regulators demand transparent AI decision-making to prevent black-box risk in financial compliance. Frameworks such as SR 11-7 and the EU AI Act call for explainability and human oversight of AI models. Supervisors need to understand why specific transactions triggered alerts, how models weight different risk factors, and what data drives automated decisions. This transparency requirement creates significant challenges for complex neural networks that operate as opaque systems.

Explainable AI techniques like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) help interpret model outputs for compliance purposes. These methods break down individual predictions, showing which transaction features contributed most to risk scores. SHAP values quantify each variable’s impact on a specific alert, while LIME generates simplified explanations by testing how the prediction changes when the input data varies. Both approaches help build the audit trail regulators expect.
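The idea behind SHAP can be illustrated with exact Shapley values on a toy model. The sketch below is not the `shap` library's API; it is a brute-force computation that averages each feature's marginal contribution over every feature ordering, which is only feasible for a handful of features. The scoring function `toy_risk_model`, its weights, and the sample transaction are invented for illustration.

```python
from itertools import permutations


def toy_risk_model(tx):
    """Hypothetical risk score with one interaction term (weights are made up)."""
    return (
        0.1 * tx["amount"] / 1000
        + 0.3 * tx["new_beneficiary"]
        + 0.2 * tx["high_risk_country"]
        + 0.2 * tx["new_beneficiary"] * tx["high_risk_country"]
    )


def shapley_values(model, instance, baseline):
    """Exact Shapley attribution of model(instance) - model(baseline)."""
    features = list(instance)
    totals = {name: 0.0 for name in features}
    orderings = list(permutations(features))
    for order in orderings:
        current = dict(baseline)  # start from the reference transaction
        previous_score = model(current)
        for name in order:
            current[name] = instance[name]  # switch one feature to its real value
            score = model(current)
            totals[name] += score - previous_score
            previous_score = score
    return {name: total / len(orderings) for name, total in totals.items()}


alert = {"amount": 5000, "new_beneficiary": 1, "high_risk_country": 1}
reference = {"amount": 1000, "new_beneficiary": 0, "high_risk_country": 0}
attributions = shapley_values(toy_risk_model, alert, reference)
```

The attributions sum to the gap between the alert's score and the reference score, and the interaction term is split evenly between the two features that jointly cause it. That additive breakdown is what makes this style of explanation usable as documentation for an individual alert.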

Human review remains essential to mitigate model bias, validate edge cases, and maintain institutional accountability. AI systems learn from historical data that may contain embedded biases or reflect outdated threat landscapes. Compliance officers provide critical judgment on cultural context, legitimate business patterns, and emerging fraud schemes that models haven’t encountered. This human oversight catches false positives that could damage customer relationships and identifies sophisticated fraud requiring investigative expertise.

Hybrid human-AI workflow models ensure balanced compliance by combining automated efficiency with expert analysis. Best practice architectures route low-risk transactions through automated clearance while escalating high-risk cases to analysts. Mid-range alerts receive AI-generated investigation guidance that highlights key risk factors and suggests verification steps. This tiered approach optimizes both speed and accuracy.
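A minimal sketch of this tiered routing, assuming risk scores normalized to the range 0 to 1. The `Route` names and the `low` and `high` thresholds are hypothetical and would need to be set from an institution's own risk appetite and validation data.

```python
from enum import Enum


class Route(Enum):
    AUTO_CLEAR = "auto_clear"        # low risk: cleared without manual review
    GUIDED_REVIEW = "guided_review"  # mid range: analyst review with AI guidance
    ESCALATE = "escalate"            # high risk: sent straight to an analyst


def route_alert(risk_score, low=0.3, high=0.8):
    """Map a model risk score in [0, 1] to a workflow tier."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= high:
        return Route.ESCALATE
    if risk_score >= low:
        return Route.GUIDED_REVIEW
    return Route.AUTO_CLEAR


decisions = [route_alert(score) for score in (0.05, 0.45, 0.92)]
```

Keeping the thresholds as explicit parameters makes them easy to document and to adjust during model reviews.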

To implement effective hybrid workflows:

- Define clear escalation thresholds based on risk scores and transaction characteristics

- Establish feedback loops where analyst decisions retrain AI models

- Create standardized investigation protocols that incorporate AI insights

- Maintain detailed documentation of human overrides and rationale

- Conduct regular model performance reviews comparing AI and human accuracy
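The feedback loop and override documentation can be sketched as a simple review record. The `ReviewRecord` fields, the helper names, and the sample cases are assumptions for illustration, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewRecord:
    alert_id: str
    model_score: float
    model_flagged: bool      # what the AI decided
    analyst_confirmed: bool  # what the analyst concluded
    rationale: str           # documented reason, kept for audit

    @property
    def is_override(self):
        """True when the analyst disagreed with the model."""
        return self.model_flagged != self.analyst_confirmed


def override_rate(records):
    """Share of reviewed alerts where the analyst overrode the model."""
    if not records:
        return 0.0
    return sum(r.is_override for r in records) / len(records)


def training_labels(records):
    """Analyst conclusions as (alert_id, label) pairs for later retraining."""
    return [(r.alert_id, int(r.analyst_confirmed)) for r in records]


# Made-up review outcomes
records = [
    ReviewRecord("A-1", 0.91, True, True, "Structuring pattern confirmed"),
    ReviewRecord("A-2", 0.84, True, False, "Known payroll batch"),
    ReviewRecord("A-3", 0.12, False, False, "Routine transfer"),
    ReviewRecord("A-4", 0.35, False, True, "Linked to open case"),
]
rate = override_rate(records)
labels = training_labels(records)
```

Tracking the override rate over time gives the regular performance review a concrete number to compare AI and human decisions against.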

Pro Tip: Implement ongoing model risk management frameworks aligned with SR 11-7 guidance. This includes quarterly validation testing, annual comprehensive reviews, and continuous monitoring of model performance metrics. Document all validation activities, maintain version control for model updates, and establish clear governance over AI system changes.

“The integration of explainable AI with human expertise creates a compliance framework that satisfies regulatory requirements while delivering superior fraud detection outcomes. Financial institutions must view AI as an augmentation tool rather than a replacement for skilled analysts.”

Guidance on EU GDPR compliance for AI covers the additional data privacy requirements that apply when AI systems process personal financial information.

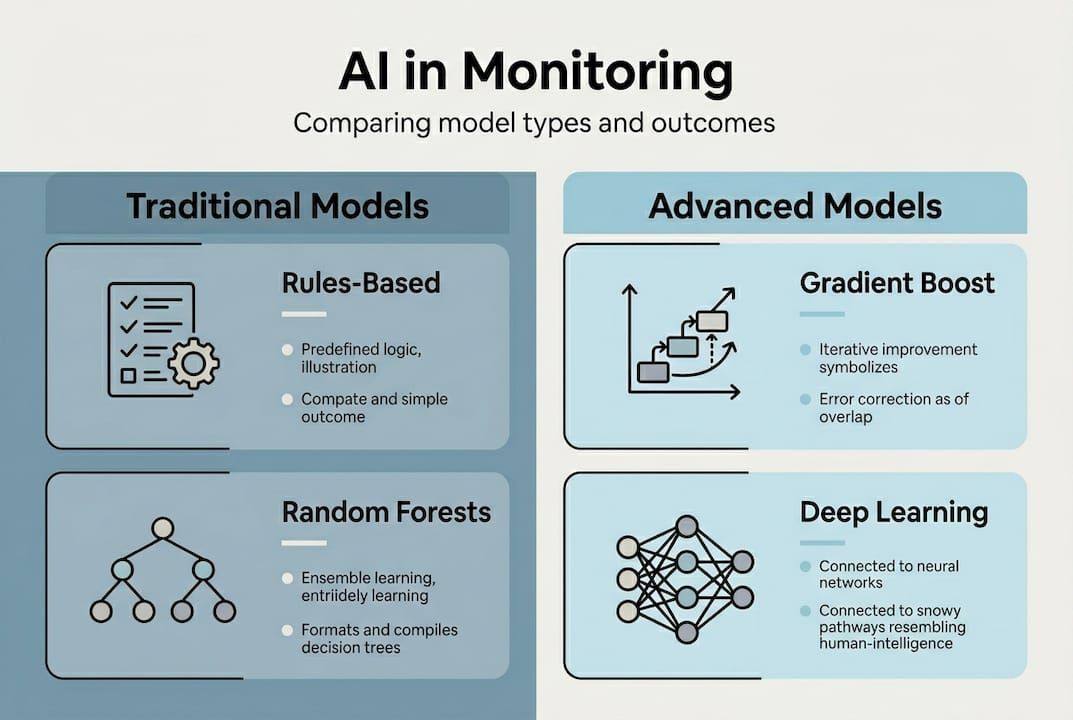

Comparing AI approaches in transaction monitoring

Different AI models each offer distinct trade-offs between accuracy, explainability, and computational cost in compliance systems. Understanding these differences helps institutions select technologies matching their risk appetite, regulatory environment, and operational capabilities.

| Model Type | Detection Accuracy | False Positive Rate | Explainability | Deployment Complexity | Best Use Case |
|---|---|---|---|---|---|
| Rule-based systems | Moderate | High | Excellent | Low | Simple fraud patterns, regulatory baselines |
| Traditional machine learning | High | Moderate | Good | Moderate | Balanced accuracy and transparency needs |
| Deep learning | Very High | Low | Limited | High | Large institutions with complex fraud schemes |
| Hybrid ensemble | Very High | Low | Moderate | High | Organizations requiring both accuracy and explainability |

Rule-based systems offer maximum transparency but limited adaptability. These traditional approaches flag transactions exceeding predefined thresholds or matching known fraud patterns. Compliance officers easily explain alerts to regulators and customers. However, rules require constant manual updates and generate excessive false positives as legitimate business practices evolve.

Traditional machine learning models like random forests and gradient boosting balance performance with interpretability. These algorithms identify complex patterns while providing feature importance rankings that explain predictions. They require less computational power than deep learning and perform well with moderate data volumes. This makes them ideal for mid-sized institutions or organizations beginning AI adoption.

Deep learning models achieve superior detection rates but operate as black boxes requiring specialized explainability techniques. Neural networks process vast data sets to recognize subtle fraud indicators across multiple transaction dimensions. They excel at detecting sophisticated schemes but demand significant computational resources and large training data sets. Regulatory scrutiny of these models requires robust XAI implementation.

Hybrid ensemble approaches combine multiple model types to optimize both accuracy and transparency. These systems use interpretable models for initial screening and deep learning for complex cases requiring advanced analysis. The architecture provides explainable decisions for most transactions while leveraging neural network power for edge cases.
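One way to picture this architecture is a two-stage cascade: an interpretable model scores every transaction, and only scores in an uncertain band are passed to a more complex model. In the sketch below, `simple_model` is a made-up additive rule score and `complex_model` is a fixed placeholder standing in for a trained neural network; the band limits are also assumptions.

```python
def cascade_score(tx, simple_model, complex_model, uncertain_band=(0.3, 0.7)):
    """Return (score, source), deferring to the complex model only when unsure."""
    low, high = uncertain_band
    simple_score = simple_model(tx)
    if low <= simple_score <= high:
        return complex_model(tx), "complex"
    return simple_score, "simple"


def simple_model(tx):
    """Hypothetical interpretable score built from two transparent rules."""
    score = 0.1
    if tx["amount"] > 10_000:
        score += 0.4
    if tx["new_beneficiary"]:
        score += 0.4
    return score


def complex_model(tx):
    """Placeholder for a trained deep learning model (returns a fixed score)."""
    return 0.85


routine = {"amount": 50, "new_beneficiary": False}
ambiguous = {"amount": 20_000, "new_beneficiary": False}
clear_risk = {"amount": 20_000, "new_beneficiary": True}

results = [
    cascade_score(tx, simple_model, complex_model)
    for tx in (routine, ambiguous, clear_risk)
]
```

Only the ambiguous transaction reaches the complex model, so most decisions remain explainable from the simple rules alone.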

Key considerations for model selection:

- Institution size and transaction volume determine computational requirements

- Regulatory environment influences explainability needs

- Data quality and quantity affect model training effectiveness

- Internal technical expertise impacts deployment and maintenance feasibility

- Risk tolerance guides the balance between false positives and missed fraud

Hosting private AI models allows institutions to maintain complete control over sensitive financial data while leveraging advanced AI capabilities.

Implementing AI-powered transaction monitoring: best practices and pitfalls

Successful AI integration requires systematic planning and cross-functional collaboration. Follow these implementation steps:

- Define clear project scope identifying specific fraud types and transaction categories to address

- Assess current data quality and establish governance processes for ongoing data management

- Select model architectures matching your regulatory requirements and technical capabilities

- Develop validation frameworks testing model performance across diverse scenarios

- Create integration plans connecting AI systems with existing compliance workflows

- Establish continuous monitoring processes tracking model accuracy and drift

- Build feedback mechanisms where analyst decisions improve model training

Data quality determines AI system effectiveness more than algorithm sophistication. Models trained on incomplete, inconsistent, or biased data produce unreliable predictions regardless of technical complexity. Invest in data cleansing, standardization, and enrichment before model development. Establish ongoing data governance ensuring consistent quality as transaction volumes grow.

Continuous monitoring and recalibration address changing fraud patterns that degrade model performance. Criminal organizations adapt tactics when detection systems identify their schemes. Schedule quarterly model reviews comparing current performance against baseline metrics. Retrain models when accuracy drops or false positive rates increase. Document all recalibration activities for regulatory validation.
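Drift in model scores can be tracked with the Population Stability Index (PSI), which compares the score distribution at validation time with the current one. The sketch below is a plain-Python version; the bin edges, the sample scores, and the `eps` floor for empty bins are assumptions. A PSI above roughly 0.25 is a commonly cited rule of thumb for a significant shift, not a regulatory threshold.

```python
import math


def population_stability_index(expected, actual, bin_edges, eps=1e-6):
    """PSI between two score samples over shared bins (last bin is right-inclusive)."""

    def proportions(scores):
        counts = [0] * (len(bin_edges) - 1)
        for s in scores:
            for i in range(len(counts)):
                is_last = i == len(counts) - 1
                in_bin = bin_edges[i] <= s < bin_edges[i + 1]
                if in_bin or (is_last and s == bin_edges[-1]):
                    counts[i] += 1
                    break
        total = sum(counts)
        if total == 0:
            raise ValueError("no scores fall inside the bin edges")
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / total, eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
# Made-up risk scores: at validation time vs. the current quarter
validation_scores = [0.1] * 40 + [0.3] * 30 + [0.6] * 20 + [0.9] * 10
current_scores = [0.1] * 10 + [0.3] * 20 + [0.6] * 30 + [0.9] * 40

psi_stable = population_stability_index(validation_scores, validation_scores, edges)
psi_shifted = population_stability_index(validation_scores, current_scores, edges)
```

An unchanged distribution gives a PSI of zero, while the shifted sample scores well above the rule-of-thumb level. Recording both values at each quarterly review documents when and why a retraining decision was made.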

Cross-functional collaboration between compliance, IT, and legal teams ensures AI systems meet operational and regulatory requirements. Compliance officers define detection priorities and investigation workflows. IT teams architect system integration and data pipelines. Legal counsel validates regulatory alignment and documentation practices. Regular coordination prevents siloed development that creates operational friction.

Common pitfalls to avoid:

- Overreliance on AI without maintaining human oversight and judgment

- Ignoring regulatory guidelines on model explainability and validation

- Underestimating the false positive management required during initial deployment

- Failing to establish clear escalation protocols for high-risk alerts

- Neglecting change management and training for compliance staff using AI tools

- Deploying models without comprehensive testing across diverse transaction scenarios

Pro Tip: Start pilot projects with measurable goals before full-scale rollouts. Select a contained use case like credit card fraud or wire transfer monitoring. Define success metrics including detection rate improvements, false positive reduction, and investigation time savings. Run the pilot for 90-180 days, gathering quantitative performance data and qualitative user feedback. Use pilot results to refine models and workflows before enterprise expansion.
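The pilot success metrics can be computed from simple confusion counts. In the sketch below, the `AlertOutcomes` class, the `relative_change` helper, and all figures are invented for illustration; they are not results from any deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertOutcomes:
    true_positives: int   # fraud that was flagged
    false_positives: int  # legitimate activity that was flagged
    false_negatives: int  # fraud that was missed
    true_negatives: int   # legitimate activity that was not flagged

    @property
    def detection_rate(self):
        """Share of actual fraud cases that were flagged (recall)."""
        return self.true_positives / (self.true_positives + self.false_negatives)

    @property
    def false_positive_rate(self):
        """Share of legitimate transactions that were flagged."""
        return self.false_positives / (self.false_positives + self.true_negatives)


def relative_change(before, after):
    """Fractional change from `before` to `after` (negative means a reduction)."""
    return (after - before) / before


# Made-up counts for the legacy system and the pilot
baseline = AlertOutcomes(60, 900, 40, 9000)
pilot = AlertOutcomes(80, 450, 20, 9450)

detection_gain = relative_change(baseline.detection_rate, pilot.detection_rate)
false_alert_change = relative_change(baseline.false_positives, pilot.false_positives)
```

With these sample counts the detection rate rises from 0.6 to 0.8 and false alerts fall by half. Agreeing on such definitions before the pilot starts avoids disputes over what counts as success.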

AI use in SAP ERP reduced fraud by 65% across 8.7 billion transactions, showing the impact that is possible with proper implementation. These results required careful planning, data preparation, and iterative refinement over multiple deployment phases.

The AI process automation module streamlines investigation workflows by automatically gathering supporting documentation, generating case summaries, and routing alerts to appropriate analysts based on expertise and workload.

Enhance your transaction monitoring with Bitecode AI solutions

Financial institutions face mounting pressure to detect fraud faster while managing compliance costs. Bitecode delivers AI-driven solutions specifically designed for compliance and risk management teams navigating these challenges. Our platform combines advanced machine learning with practical explainability, enabling you to implement sophisticated transaction monitoring without extensive development cycles.

Discover our AI assistant module that empowers compliance officers to query transaction data using natural language, accelerating investigations and reducing technical barriers. Automate transaction monitoring workflows to detect fraud patterns in real-time while maintaining the human oversight regulators require. Integrate custom CRM solutions tailored to compliance needs, connecting AI insights with case management and regulatory reporting systems. Our modular approach starts projects with up to 60% of baseline systems pre-built, delivering rapid deployment and measurable fraud reduction.

Frequently asked questions

What are the main benefits of using AI in transaction monitoring?

AI increases detection rates by identifying complex fraud patterns that evade traditional rule-based systems. It reduces false positives by learning from historical data and analyst feedback, focusing investigative resources on genuine threats. The technology improves operational efficiency by processing higher transaction volumes without proportional staff increases, while adapting continuously to emerging fraud tactics.

How does explainable AI help meet regulatory requirements?

Explainable AI interprets model outputs for human understanding, providing the transparency regulators demand for compliance systems. Regulatory frameworks expect institutions to demonstrate how AI systems reach decisions, and techniques like SHAP and LIME are common ways to do so. These methods generate audit trails showing which transaction features contributed to risk scores, enabling compliance officers to validate alerts and respond to regulatory inquiries with documented rationale.

What are key challenges when deploying AI for transaction monitoring?

Key challenges include achieving model explainability while maintaining detection accuracy, meeting evolving regulatory demands for transparency and validation, and balancing automated efficiency with expert human judgment. Hybrid human-AI approaches are essential due to regulatory and explainability challenges. Organizations must also address data quality issues, manage false positives during initial deployment, and build cross-functional collaboration between compliance, IT, and legal teams.

How can financial institutions get started with AI-powered transaction monitoring?

Start with pilot programs targeting specific fraud types or transaction categories to validate model performance and refine workflows before enterprise expansion. Invest in data quality improvement and governance processes, as clean, consistent data determines AI effectiveness more than algorithm sophistication. Incremental AI integration with human oversight improves detection while managing risks. Combine AI insights with compliance expertise, establish clear escalation protocols, and maintain detailed documentation for regulatory validation throughout implementation.