Why Handover Is More Than Code Delivery

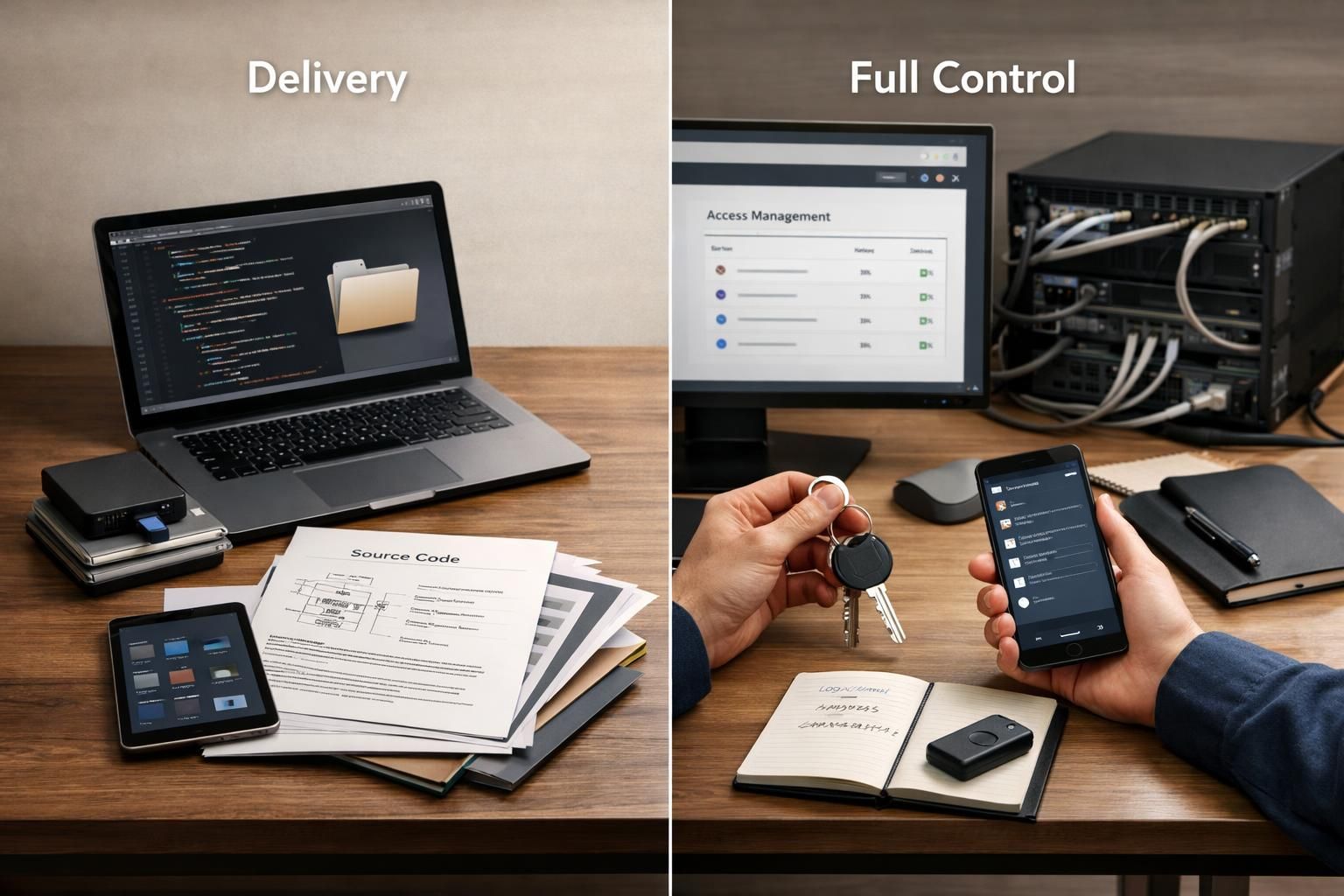

Most software projects end with a handover. The agency delivers something, the client accepts it, and final payment follows. The problem is that "delivered" and "actually handed over" are not the same thing.

A client who receives repository access may still lack the ability to deploy the application independently. A client who receives documentation may still depend on the original team for secrets, credentials, or operational knowledge that was never written down. A client who receives "the code" may find that critical infrastructure—CI/CD pipelines, cloud accounts, monitoring, domains, certificates—remains under vendor control.

The real test of a successful handover is not whether you received files. It is whether another team can take over without calling the original vendor for help.

Delivered vs Actually Handed Over

Before final payment, verify that you have not just received assets, but actually control them. The following table shows the difference:

| Category | Delivered | Actually Handed Over |
|---|---|---|
| Source code | Zip file or read access to repo | Full repository with history, issues, PRs, and admin rights transferred to your org |
| Infrastructure | Documented architecture diagram | Cloud account ownership, admin access, and ability to provision or destroy resources |
| CI/CD | Pipeline configuration files in repo | Pipeline ownership, secrets access, and ability to run deployments without vendor credentials |
| Documentation | README and API docs | Architecture decision records, runbooks, environment setup guides, and data model docs |
| Security | Statement that security was considered | Documented auth/authz approach, secrets rotation process, and audit-relevant logging |
| Ownership | Verbal confirmation of IP transfer | Written assignment, third-party account ownership, and credential rotation after handover |

If any row of your handover stops at the "Delivered" column and never reaches "Actually Handed Over", you are accepting risk that will cost money later.

What Your Software Agency Must Hand Over Before Final Payment

This is the core question buyers should answer before signing off. A complete handover includes the following categories:

Repository and Code Ownership

You should receive full ownership of the repository, not just read access. On platforms like GitHub, this means a proper repository transfer that preserves commit history, issues, pull requests, wikis, releases, webhooks, and associated metadata. A code export or zip file loses this context.

Verify that your organization owns the repository at the platform level. If the repo remains in the vendor's GitHub organization with your team added as collaborators, you do not have full control.
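One way to make this check concrete is to inspect the repository metadata that the platform reports. The sketch below assumes the repository is on GitHub and that you have already fetched the "get a repository" REST API response with your own token; the organization names and the sample response are hypothetical.

```python
from typing import Any, Mapping


def ownership_problems(repo: Mapping[str, Any], expected_org: str) -> list[str]:
    """Return reasons the repository does not look fully handed over.

    `repo` is assumed to have the shape of a GitHub REST API
    "get a repository" response, fetched with your own token.
    """
    problems = []
    owner = repo.get("owner", {}).get("login", "")
    if owner.lower() != expected_org.lower():
        problems.append(f"repository is owned by '{owner}', not '{expected_org}'")
    # `permissions` describes what the authenticated user may do.
    if not repo.get("permissions", {}).get("admin", False):
        problems.append("your account lacks admin rights on the repository")
    return problems


# Hypothetical response fragment: the repo still lives in the vendor's org.
sample = {
    "full_name": "vendor-agency/client-app",
    "owner": {"login": "vendor-agency"},
    "permissions": {"admin": False, "push": True, "pull": True},
}
for problem in ownership_problems(sample, "client-co"):
    print(problem)
```

An empty result is a necessary signal, not a sufficient one: it does not show who else still holds admin rights.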

Infrastructure and Cloud Access

Repository access means nothing if the application runs on infrastructure you do not control. Before final payment, verify:

- Cloud account ownership (AWS, GCP, Azure, or equivalent) is in your organization's name

- Admin access to all production and staging environments

- Ability to provision, modify, or destroy resources without vendor involvement

- Domain registration and DNS management under your control

- SSL certificates and their renewal process documented and accessible

If your application runs in the vendor's cloud account, you have a hosting relationship, not ownership.
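Certificate renewal is one item on this list that is easy to monitor yourself. The following is a minimal sketch using only the Python standard library; the 30-day threshold mentioned afterwards is an arbitrary assumption, not a recommendation from any standard.

```python
import socket
import ssl


def days_until_expiry(not_after: str, now: float) -> float:
    """Days between `now` (epoch seconds) and a certificate's notAfter field."""
    expires_at = ssl.cert_time_to_seconds(not_after)
    return (expires_at - now) / 86400


def fetch_not_after(host: str, port: int = 443) -> str:
    """Read the notAfter field of the certificate a server presents."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]


# Usage (needs network access; replace the host with your own domain):
#   import time
#   remaining = days_until_expiry(fetch_not_after("example.com"), time.time())
#   print(f"certificate expires in {remaining:.0f} days")
```

If the remaining time drops below, say, 30 days and nobody on your team knows how renewal happens, the renewal process has not really been handed over.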

CI/CD and Deployment Control

A common failure mode: the client receives code but the deployment pipeline still requires vendor credentials, secrets, or manual intervention. According to GitHub's documentation on secrets, GitHub Actions secrets are stored at the organization, repository, or environment level, and a stored value cannot be read back once it has been saved. If those secrets still live under the vendor's control, you cannot deploy independently until they are recreated or rotated under yours.

Verify:

- CI/CD pipeline definitions are in your repository

- Pipeline secrets have been migrated to your organization's secret management

- You can trigger a deployment to production without vendor assistance

- You can modify pipeline configuration without vendor approval
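To find out which secrets need migrating, it can help to list every secret the pipeline definitions reference and compare that with what your organization has configured. The sketch below assumes GitHub Actions workflow files, where secrets appear as `secrets.NAME` inside expressions. The workflow content and secret names are hypothetical, and the pattern is a heuristic that would also match the same text inside comments.

```python
import re

# Heuristic: any `secrets.NAME` reference in a workflow file.
SECRET_REF = re.compile(r"\bsecrets\.([A-Za-z_]\w*)")


def referenced_secrets(workflow_text: str) -> list[str]:
    """Return the sorted, de-duplicated secret names a workflow refers to."""
    return sorted(set(SECRET_REF.findall(workflow_text)))


# Hypothetical workflow file content.
WORKFLOW = """\
name: deploy
on: push
jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: ./deploy.sh
        env:
          AWS_ACCESS_KEY_ID: ${{ secrets.AWS_ACCESS_KEY_ID }}
          AWS_SECRET_ACCESS_KEY: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
          DEPLOY_TOKEN: ${{ secrets.DEPLOY_TOKEN }}
"""

# Names you have actually configured in your own organization (assumed input).
configured = {"AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY"}

missing = [name for name in referenced_secrets(WORKFLOW) if name not in configured]
print("Secrets still to migrate:", missing)
```

Any name in `missing` is a deployment that will fail, or silently depend on the vendor, the first time you run it alone.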

Documentation for Future Teams

Documentation is not a courtesy; it is a transfer of knowledge that future teams will otherwise have to rediscover at your expense. A complete handover includes:

Product documentation: Feature specifications, user flows, and business rules that explain what the system does and why.

Technical documentation: Architecture overview, data model, API documentation, integration documentation, and environment configuration guides.

Architecture Decision Records: ADRs capture the options considered, the choice made, and the rationale behind significant technical decisions. Without them, future teams will re-litigate decisions about authentication approaches, multi-tenancy models, caching strategies, or data retention policies without understanding why the original choices were made. Both AWS and Google Cloud recommend ADRs as standard practice for reducing repeated decision-making and improving handover quality.

Runbooks: Operational procedures for common tasks, deployment steps, rollback procedures, and incident response guidance.

Security Handover

A statement that "security was considered" is not a handover. You need verifiable evidence of how security is implemented and documented. Based on OWASP Application Security Verification Standard principles, verify:

Authentication: How users prove their identity. What mechanisms exist (passwords, MFA, SSO)? Where is this documented? Who controls the identity provider?

Authorization: How access permissions are enforced. According to OWASP guidance, authorization should be enforced on every request, not assumed from prior checks. Ask to see where this is implemented.

Session management: How sessions are created, maintained, and terminated. Session lifecycle documentation should exist and be accessible to your team.

Secrets management: How credentials, API keys, and sensitive configuration are stored and rotated. Following OWASP secrets management guidance, secrets should never be committed to repositories, and rotation procedures should be documented.

Audit-relevant logging: What events are logged for security and compliance purposes? Login attempts, failed authentications, privilege changes, and high-value transactions should be captured in logs your team can access.
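The claim that secrets were never committed can be spot-checked rather than taken on trust. The sketch below scans a working tree for two widely recognized patterns (AWS access key IDs and PEM private key headers). It is an illustration only: it does not search Git history, covers very few secret formats, and is no substitute for a dedicated scanning tool.

```python
import re
from pathlib import Path

PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspicious line."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


def scan_tree(root: Path) -> dict[str, list[tuple[int, str]]]:
    """Scan every readable text file under `root`, skipping the .git folder."""
    results = {}
    for path in root.rglob("*"):
        if path.is_file() and ".git" not in path.parts:
            try:
                text = path.read_text(encoding="utf-8")
            except (UnicodeDecodeError, OSError):
                continue  # binary or unreadable file
            found = scan_text(text)
            if found:
                results[str(path)] = found
    return results


# Synthetic example; the key below is a made-up placeholder, not a real credential.
sample = "region = eu-west-1\nkey = " + "AKIA" + "X" * 16 + "\n"
print(scan_text(sample))
```

A finding in the current tree usually means the credential is also in the history, so treat it as a reason to rotate that secret, not just delete the line.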

Dependency and License Inventory

Your application depends on third-party libraries, frameworks, and services. Before final payment, you should receive:

- A complete list of dependencies with version numbers

- License information for each dependency, ideally using standardized SPDX identifiers

- Known security advisories or vulnerabilities in current dependency versions

- Documentation of upgrade paths for major dependencies

This inventory is increasingly treated as a risk-management input. CISA's guidance on software bills of materials reflects a broader industry shift toward treating software composition as a governance concern, not just an engineering artifact.
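As an illustration of what a minimal inventory looks like, the sketch below lists installed packages with their versions and declared licenses. It assumes the application is written in Python and that the script runs inside the application's environment; other ecosystems have their own equivalents. The license field is whatever each package declares, which may be missing or may not be a valid SPDX identifier.

```python
from importlib import metadata


def dependency_inventory() -> list[dict[str, str]]:
    """List installed distributions with version and declared license."""
    rows = []
    for dist in metadata.distributions():
        meta = dist.metadata
        rows.append({
            "name": meta.get("Name") or "UNKNOWN",
            "version": meta.get("Version") or "UNKNOWN",
            # Newer packages declare an SPDX expression; older ones use License.
            "license": meta.get("License-Expression") or meta.get("License") or "UNKNOWN",
        })
    return sorted(rows, key=lambda row: row["name"].lower())


for row in dependency_inventory():
    print(f'{row["name"]}=={row["version"]}  license: {row["license"]}')
```

Rows that print `UNKNOWN` for the license are the ones to raise with the vendor before sign-off.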

Legal Ownership and IP Transfer

Verify in writing—not verbally—that intellectual property rights have been transferred. This typically means:

- Written assignment of copyright in the custom code

- Clear statement of your rights to use, modify, and distribute the software

- Clarification of any components that remain under vendor or third-party license

Additionally, verify ownership of third-party accounts that are critical to operations:

- Domain registrations

- SSL certificate accounts

- App store accounts (iOS, Android, relevant marketplaces)

- Analytics and error tracking accounts

- Email service providers

- Payment processor accounts

If these accounts remain in the vendor's name, you have operational dependency even if you "own" the code.

The Reproducibility Test

The most concrete acceptance test for a handover is this: assign a developer who did not work on the project. Give them only the handover package and access credentials. Ask them to:

- Set up a local development environment from the provided documentation

- Run the test suite successfully

- Deploy the application to a fresh environment (not the existing production)

- Complete one key business flow end-to-end

If this succeeds without calling the original vendor, the handover is real. If it fails, you are still dependent.

This approach mirrors the production readiness reviews in Google's SRE practices, which check that a service meets operational standards before another team takes over its support.
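The four steps above can be recorded as a small script so the result is repeatable and leaves evidence. This is a sketch under stated assumptions: the commands are placeholders that only simulate success and failure, and you would substitute whatever the handover documentation actually says to run.

```python
import subprocess
import sys
from typing import Sequence


def run_steps(steps: Sequence[tuple[str, Sequence[str]]]) -> tuple[bool, list[str]]:
    """Run each step in order and stop at the first failure."""
    log = []
    for name, command in steps:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            log.append(f"FAIL {name} (exit code {result.returncode})")
            return False, log
        log.append(f"PASS {name}")
    return True, log


# Placeholder commands standing in for the documented setup, test and deploy steps.
steps = [
    ("set up environment", [sys.executable, "-c", "print('setup ok')"]),
    ("run test suite", [sys.executable, "-c", "raise SystemExit(1)"]),
    ("deploy to fresh environment", [sys.executable, "-c", "print('not reached')"]),
]
ok, log = run_steps(steps)
print("\n".join(log))
print("handover reproducible" if ok else "handover NOT reproducible")
```

Stopping at the first failure is deliberate: the first step that needs a call to the vendor is the gap to fix before anything later in the list is worth testing.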

Red Flags That a Handover Is Incomplete

Watch for these warning signs before releasing final payment:

"It works on my machine": If the vendor can run the application but your team cannot reproduce the environment, critical configuration or dependencies are missing.

Hidden configuration: Environment variables, feature flags, or operational settings that are not documented or accessible. Following Twelve-Factor methodology, configuration should be strictly separated from code and documented.

No dependency inventory: If the vendor cannot produce a list of dependencies and their licenses, you are accepting unknown legal and security exposure.

Architecture documentation is "in progress": Documentation that will be delivered "later" is often documentation that will never arrive. Require it before sign-off.

Vendor-controlled CI/CD: If deployments require the vendor to push a button, approve a pipeline, or provide credentials, you do not have operational independence.

Ambiguous IP ownership: Verbal assurances without written agreements leave you exposed to disputes about modification rights, resale rights, or code reuse.

Third-party accounts in vendor name: Domains, certificates, app store listings, or monitoring accounts registered under the vendor create ongoing dependency.

No known-issues register: Every software project has known limitations, workarounds, or deferred fixes. If the vendor claims there are none, they are either hiding information or have not been tracking issues properly.
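The hidden-configuration red flag lends itself to a simple check: compare the variables set in a running environment against those the documentation lists. The sketch below assumes the vendor documents configuration in a `.env.example`-style file and that the application's variables share a common prefix; the `APP_` prefix and all variable names are hypothetical.

```python
from typing import Mapping


def documented_names(env_example: str) -> list[str]:
    """Extract variable names from KEY=value lines, ignoring comments."""
    names = []
    for line in env_example.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        names.append(line.split("=", 1)[0].strip())
    return names


def undocumented(running: Mapping[str, str], env_example: str, prefix: str) -> list[str]:
    """Names set in the running environment, under `prefix`, that the docs omit."""
    known = set(documented_names(env_example))
    return sorted(k for k in running if k.startswith(prefix) and k not in known)


# Hypothetical documentation file and production environment.
ENV_EXAMPLE = "# Database\nAPP_DATABASE_URL=\nAPP_LOG_LEVEL=info\n"
production = {
    "APP_DATABASE_URL": "postgres://...",
    "APP_LOG_LEVEL": "warning",
    "APP_FEATURE_NEW_CHECKOUT": "1",
    "PATH": "/usr/bin",
}
print(undocumented(production, ENV_EXAMPLE, "APP_"))
```

Each name this returns is a setting the system depends on that a new team would have no way of knowing about.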

Sign-Off Blockers

These conditions should prevent acceptance and delay final payment:

- Fresh-environment deployment fails without contractor involvement

- Critical architecture documentation does not exist

- Security-critical gaps are identified without remediation plans

- IP and usage rights are ambiguous or undocumented

- Dependency and license inventory is missing

- Repository remains in vendor organization without transfer

- Cloud accounts or infrastructure remain vendor-owned

- Secrets rotation cannot be performed without vendor assistance
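These blockers work best as an explicit gate rather than a judgment call made under deadline pressure. One possible way to record them is sketched below; the pass/fail values are invented for illustration, and the descriptions are abbreviated from the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    description: str
    passed: bool
    blocker: bool = True  # non-blockers are tracked but do not stop sign-off


def open_blockers(checks: list[Check]) -> list[str]:
    """Descriptions of blocking checks that have not passed."""
    return [c.description for c in checks if c.blocker and not c.passed]


def ready_for_sign_off(checks: list[Check]) -> bool:
    return not open_blockers(checks)


# Illustrative results only.
checks = [
    Check("Fresh-environment deployment succeeds without contractor", True),
    Check("Repository transferred to our organization", True),
    Check("Dependency and license inventory delivered", False),
    Check("Secrets rotation performed without vendor assistance", False),
    Check("API documentation reviewed for typos", False, blocker=False),
]
print("Ready for sign-off:", ready_for_sign_off(checks))
for description in open_blockers(checks):
    print("BLOCKER:", description)
```

Separating blockers from nice-to-haves keeps the conversation with the vendor focused on what actually holds back final payment.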

Practical Sign-Off Checklist

Use this checklist before releasing final payment:

Repository and Code

- Repository ownership transferred to your organization

- Full commit history preserved

- All branches, tags, and releases accessible

- No vendor credentials or accounts required for repository access

Infrastructure and Environments

- Cloud account in your organization's name

- Admin access to all environments (production, staging, development)

- Domain and DNS management under your control

- SSL certificates accessible and renewal documented

CI/CD and Deployment

- Pipeline definitions in your repository

- Secrets migrated to your secret management

- Independent deployment verified without vendor involvement

- Rollback procedures documented and tested

Documentation

- Architecture overview with diagrams

- Architecture decision records for significant choices

- API documentation complete and current

- Data model documentation

- Environment setup guide verified by non-project developer

- Runbooks for common operational tasks

Security

- Authentication implementation documented

- Authorization model documented

- Session management documented

- Secrets management process documented

- Audit logging in place and accessible

- Security-relevant configuration documented

Dependencies and Compliance

- Dependency inventory with versions

- License inventory for all dependencies

- Known vulnerabilities documented with remediation status

- Third-party service documentation and account ownership

Legal and Ownership

- Written IP assignment executed

- Third-party accounts transferred to your organization

- Credentials rotated after handover

- Vendor access revoked from production systems

Why This Matters for Vendor Selection

If you are early in your search for a software house, consider how different vendors approach handover. Some agencies treat handover as an afterthought—a final formality before closing the project. Others treat it as part of the product quality standard, with handover readiness built into the development process from the beginning.

The difference matters because retrofitting handover documentation and control transfer at the end of a project is expensive and often incomplete. When handover is planned from day one, the system is built to be understandable, deployable, and maintainable by teams other than the original developers.

Companies that have experienced the cost of dependency on external platforms understand why ownership and control matter. The goal is not just to receive a working application, but to receive an application you can actually operate, modify, and evolve without ongoing vendor dependency.

When a system is built on a modular foundation and the code is in your hands from the start, handover becomes a transfer of control rather than a move from dependence into uncertainty.

Summary

A complete handover is not a ceremony. It is a transfer of control that enables your organization to operate, modify, and evolve the software without ongoing vendor dependency.

Before final payment, verify that you have received not just code, but actual control: repository ownership, infrastructure access, deployment capability, documentation, security evidence, dependency inventory, and legal ownership. Test the handover by having someone who did not work on the project set up, deploy, and operate the system from the provided materials.

If any of these elements are missing, you are accepting risk that will cost money later. The time to address gaps is before sign-off, not after.